📋 What this article covers: Which companies are in scope of the EU AI Act, what the phased compliance timeline looks like from 2024 through 2027, which obligations kick in at each stage, how scope is determined for non-EU companies, and what the consequences of non-compliance are. If your company uses, develops, deploys, or sells AI systems and you are not sure whether the EU AI Act applies to you - this is your starting point.

The EU AI Act entered into force on 1 August 2024. What that sentence does not tell you is that "entered into force" and "applies to you now" are very different things - the regulation rolls out in phases across three years, and which phase matters for your company depends on what kind of AI you build or use and how you are classified in the value chain.

The scope question is also broader than most companies initially assume. The EU AI Act is not just a regulation for AI companies. It applies to any organisation that places an AI system on the EU market, puts one into service within the EU, or - in certain cases - operates an AI system whose outputs affect people in the EU, regardless of where the company itself is based. A US software company selling an AI-powered recruitment tool to European employers is in scope. A Canadian fintech deploying a credit-scoring model to EU customers is in scope. A UK insurer using a third-party AI underwriting tool from a non-EU vendor may still carry obligations under the Act.

This article gives you the complete picture: who is covered, under what role, from when, and what is required at each stage of the timeline.

Who Is Covered - The Four Roles

The EU AI Act does not create a single category of "covered company." It assigns obligations based on the role an organisation plays in the AI value chain. The same company may hold multiple roles simultaneously - and the obligations for each role are different. Understanding which role or roles apply to you is the first step to determining what you actually need to do.

Provider

A provider is any natural or legal person that develops an AI system or general-purpose AI model and places it on the market or puts it into service under their own name or trademark - whether for payment or for free. This is the highest-obligation role under the Act.

Examples: An AI software company selling a document processing tool. A fintech that built and deployed its own credit scoring model. An open-source foundation releasing a foundation model. A company that fine-tuned a base model and released it under their own brand.

Deployer

A deployer is any natural or legal person - except end users - that uses an AI system under their own authority for a professional purpose. Deployers do not develop the AI system; they use one developed by a provider. Deployers carry significant obligations, particularly for high-risk AI systems, even though they did not build what they are using.

Examples: An insurer using a third-party AI tool to assess claims. An HR department using a vendor AI system for CV screening. A hospital using an AI-powered diagnostic tool from a medical device company. A bank using an external AI model for loan decisions.

Importer

An importer is any natural or legal person located or established in the EU that places on the EU market an AI system bearing the name or trademark of a person established outside the EU. Importers act as a compliance bridge for non-EU providers reaching the EU market.

Examples: A European distributor reselling a US-developed AI tool under the original vendor's branding. An EU subsidiary of a non-EU company that handles EU market distribution of the parent company's AI products.

Distributor

A distributor is any natural or legal person in the supply chain - other than the provider or importer - that makes an AI system available on the EU market without modifying it. Distributors carry lighter obligations than providers, but they are not exempt. Before making a high-risk AI system available on the market, they must verify that it bears the required CE marking and is accompanied by the required conformity documentation.

Examples: A software marketplace reselling third-party AI tools. An IT services company that bundles an AI product from another vendor into a package solution without modifying the AI component.
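
The four role definitions above can be turned into a rough first-pass checklist. The sketch below is illustrative only: the `Activity` fields and the `roles_for` function are hypothetical names introduced here, each yes/no answer compresses a legal definition into a single flag, and the result is a starting point for analysis rather than a legal determination.

```python
from dataclasses import dataclass
from enum import Enum


class Role(Enum):
    PROVIDER = "provider"
    DEPLOYER = "deployer"
    IMPORTER = "importer"
    DISTRIBUTOR = "distributor"


@dataclass
class Activity:
    """Hypothetical yes/no answers about one AI system (field names are illustrative)."""
    # Develops the system and places it on the market or puts it into service
    # under its own name or trademark, for payment or for free.
    develops_and_supplies_under_own_name: bool = False
    # Uses the system under its own authority for a professional purpose.
    uses_professionally_under_own_authority: bool = False
    # Is established in the EU.
    established_in_eu: bool = False
    # Places on the EU market a system carrying a non-EU party's name or trademark.
    places_non_eu_branded_system_on_eu_market: bool = False
    # Makes a system available on the EU market without modifying it.
    makes_available_without_modifying: bool = False


def roles_for(a: Activity) -> set[Role]:
    """Return every role the answers point to; one organisation can hold several."""
    roles: set[Role] = set()
    if a.develops_and_supplies_under_own_name:
        roles.add(Role.PROVIDER)
    if a.uses_professionally_under_own_authority:
        roles.add(Role.DEPLOYER)
    if a.established_in_eu and a.places_non_eu_branded_system_on_eu_market:
        roles.add(Role.IMPORTER)
    # A distributor is, by definition, someone other than the provider or importer.
    if a.makes_available_without_modifying and not roles & {Role.PROVIDER, Role.IMPORTER}:
        roles.add(Role.DISTRIBUTOR)
    return roles


# A fintech that built its own credit scoring model and also runs it in production.
fintech = Activity(
    develops_and_supplies_under_own_name=True,
    uses_professionally_under_own_authority=True,
)
print(sorted(role.value for role in roles_for(fintech)))
```

Returning a set rather than a single value reflects the point made above that the same company may hold multiple roles at once, each with its own obligations.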

Geographic Scope - Does It Apply to Non-EU Companies?

The EU AI Act follows the same extraterritorial logic as the GDPR. It does not matter where your company is incorporated or where your servers are located. What matters is whether your AI system's outputs affect people in the EU.

Specifically, the Act applies to providers that place AI systems on the EU market or put them into service in the EU - regardless of the provider's establishment location. It also applies to deployers established in the EU, and to providers and deployers established outside the EU when the output of their AI system is used in the EU.

| Scenario | In scope? | Why |
|---|---|---|
| EU company developing and selling AI to EU customers | ✅ Yes | Provider established in the EU; AI placed on EU market |
| US company selling AI software to European businesses | ✅ Yes | Provider placing AI system on the EU market, regardless of establishment location |
| EU company using a third-party AI tool for internal HR decisions | ✅ Yes | EU-established deployer of an AI system affecting EU workers |
| Canadian fintech using AI to make lending decisions for EU customers | ✅ Yes | Output of AI system used in the EU; extraterritorial scope applies |
| US company using AI exclusively for internal US operations with no EU market activity | ❌ No | No EU nexus - outputs do not affect persons in the EU |
| EU research institution developing AI exclusively for scientific research not placed on the market | ❌ No | Research and development exemption applies; not placed on market or put into service |

Non-EU companies that are in scope but have no EU establishment must appoint an authorised representative established in the EU before placing high-risk AI systems on the EU market. This representative becomes the point of contact for market surveillance authorities and carries legal responsibility for ensuring the provider's compliance obligations are met.
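
The scope rules in this section reduce to a handful of conditions, sketched below as a first-pass screen. The function and parameter names are hypothetical, the exemptions covered later in the article are deliberately left out, and the output should be read as a prompt for further analysis rather than a conclusion.

```python
def in_scope(role: str, established_in_eu: bool,
             on_eu_market: bool, output_used_in_eu: bool) -> bool:
    """First-pass geographic scope screen (illustrative; ignores exemptions).

    role              -- "provider" or "deployer"
    established_in_eu -- the organisation is established in the EU
    on_eu_market      -- the system is placed on the EU market or put into service in the EU
    output_used_in_eu -- the system's output is used in the EU
    """
    # Providers are covered wherever they are established if the system reaches the EU.
    if role == "provider" and on_eu_market:
        return True
    # Deployers established in the EU are covered.
    if role == "deployer" and established_in_eu:
        return True
    # Non-EU providers and deployers are covered when the output is used in the EU.
    return role in {"provider", "deployer"} and not established_in_eu and output_used_in_eu


def needs_authorised_representative(established_in_eu: bool,
                                    on_eu_market: bool, high_risk: bool) -> bool:
    """Non-EU providers of high-risk systems need an EU-based authorised representative."""
    return (not established_in_eu) and on_eu_market and high_risk


# US company selling AI software to European businesses.
print(in_scope("provider", established_in_eu=False, on_eu_market=True, output_used_in_eu=True))
# US company using AI only for internal US operations.
print(in_scope("deployer", established_in_eu=False, on_eu_market=False, output_used_in_eu=False))
```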

The Risk Tiers - What Category Is Your AI System?

The EU AI Act does not apply the same obligations to all AI systems. Obligations are tiered by risk - and what tier your AI system falls into determines both what you need to do and by when. Understanding your risk category is the prerequisite for reading the compliance timeline correctly.

🚫 Prohibited AI - Unacceptable risk

A small category of AI applications that are banned outright. These include real-time remote biometric identification in public spaces for law enforcement (with narrow exceptions), social scoring systems, AI that exploits vulnerabilities of specific groups, subliminal manipulation, and AI used for predictive policing based solely on profiling. No company - regardless of size or sector - may deploy these systems.

Applies from: 2 February 2025

⚠️ General-Purpose AI (GPAI) models

Foundation models and large language models trained on broad data and capable of being used across a wide range of tasks. GPAI models - whether open or closed - carry their own obligations around transparency, technical documentation, and copyright compliance. Those designated as "systemic risk" models (trained on compute above 10²⁵ FLOPs) carry additional requirements including adversarial testing and incident reporting.

Applies from: 2 August 2025

🔶 High-risk AI systems

The tier that generates the most obligations and the most compliance work. High-risk AI systems fall into two groups: AI that is a safety component of regulated products (medical devices, machinery, vehicles, aviation) and AI used in specific high-stakes applications listed in Annex III - including biometrics, critical infrastructure, education, employment, essential services, law enforcement, migration, and administration of justice. High-risk AI requires conformity assessment, technical documentation, human oversight measures, data governance, transparency obligations, and registration in the EU AI database.

Applies from: 2 August 2026 (2 August 2027 for high-risk AI embedded in regulated products)

ℹ️ Limited-risk AI systems

AI systems that interact with humans or generate synthetic content face lighter transparency obligations. Chatbots must disclose that the user is interacting with an AI. Systems generating deepfakes must label content as AI-generated. Emotion recognition and biometric categorisation systems must inform people when these are in use.

Applies from: 2 August 2026

✅ Minimal-risk AI systems

The vast majority of AI applications fall here - spam filters, AI-powered recommendation systems, inventory management tools, predictive maintenance, most productivity software with AI features. No mandatory obligations apply, though voluntary codes of conduct are encouraged.

No mandatory compliance obligations
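
Once a system has been assigned a tier, the application dates listed above can be kept in a simple lookup. The sketch below is a minimal illustration: the tier keys and the `obligations_apply` function are hypothetical names, and the high-risk tier is split in two to reflect the later date for AI embedded in regulated products.

```python
from datetime import date
from typing import Optional

# Application date per tier, as listed in this section.
# None means no mandatory obligations apply to that tier.
APPLIES_FROM: dict[str, Optional[date]] = {
    "prohibited": date(2025, 2, 2),
    "gpai": date(2025, 8, 2),
    "high_risk_annex_iii": date(2026, 8, 2),
    "high_risk_regulated_product": date(2027, 8, 2),
    "limited_risk": date(2026, 8, 2),
    "minimal_risk": None,
}


def obligations_apply(tier: str, on: date) -> bool:
    """True if the tier's obligations are already applicable on the given date."""
    start = APPLIES_FROM[tier]
    return start is not None and on >= start


# Which tiers are already live on a chosen reference date?
reference = date(2026, 2, 1)
live = [tier for tier in APPLIES_FROM if obligations_apply(tier, reference)]
print(live)
```

A missing tier key raises a `KeyError` on purpose: an unclassified system should stop the check rather than silently pass it.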

The Compliance Timeline - What Applies When

The EU AI Act came into force on 1 August 2024. From that date, a phased compliance schedule applies. The phases are not optional stages - each one represents a hard legal deadline after which non-compliance carries enforcement consequences.

Timeline summary

| Date | What applies | Who is affected |
|---|---|---|
| 1 Aug 2024 | Act enters into force; definitions, general provisions, governance bodies established | All in-scope organisations (awareness/preparation phase) |
| 2 Feb 2025 | Prohibited AI practices unlawful | Any company deploying AI - review against prohibited use cases |
| 2 Aug 2025 | GPAI model obligations; national competent authorities operational | Foundation model and LLM developers and releasers |
| 2 Aug 2026 | High-risk AI (Annex III); limited-risk transparency; EU AI database | Providers and deployers across employment, credit, healthcare, education, public services, law enforcement |
| 2 Aug 2027 | High-risk AI embedded in regulated physical products | Medical device, automotive, aviation, machinery manufacturers |

What the Timeline Means for Your Type of Company

The obligations that hit you, and when, depend not just on your industry but on your role in the AI value chain and what your AI systems are used for. Here is what the timeline looks like in practice for the most common company profiles.

🏦 Banks, insurers, and financial services firms

Credit scoring, fraud detection, algorithmic trading, insurance underwriting, loan decisioning, and customer risk profiling all potentially touch high-risk Annex III categories - specifically the provision of essential private services and access to essential resources. Financial institutions using AI in these areas face the August 2026 deadline and must have conformity assessments, human oversight procedures, and technical documentation in place by then. Firms also need to assess whether their AI vendors are compliant and update procurement contracts accordingly. The intersection of EU AI Act obligations with existing EBA and EIOPA requirements around model risk and algorithmic accountability makes financial services one of the most complex compliance contexts.

🏥 Healthcare and MedTech

AI that is a safety component of a medical device falls under the regulated products category with the August 2027 deadline. Standalone AI used for medical diagnosis, treatment recommendation, or triage - not embedded in a regulated device - falls under Annex III and the August 2026 deadline. MedTech companies need to determine early which category their products fall into because the conformity assessment routes and timelines differ significantly. Companies already CE-marked under the Medical Device Regulation face an additional layer of interaction between the MDR conformity route and the AI Act requirements.

💼 HR software and recruitment platforms

AI used for recruitment, CV screening, shortlisting, candidate scoring, and employment decisions is explicitly listed in Annex III as a high-risk application. This applies to both providers (companies building and selling HR AI tools) and deployers (employers using those tools). The obligation falls on both sides of the transaction. August 2026 is the deadline. HR software providers should already be building compliant products; employers using AI in hiring should already be assessing their vendor compliance and their own human oversight obligations.

🤖 AI platform companies and foundation model providers

Companies releasing GPT-style models, open-source foundation models, or large-scale AI platforms face the August 2025 GPAI deadline. This means technical documentation, transparency information for downstream providers, and copyright compliance policies had to be in place twelve months after the Act entered into force. Companies whose models exceed the systemic risk compute threshold face additional obligations including adversarial testing and mandatory incident reporting to the EU AI Office. This deadline has already passed - companies in this space need to be in compliance now.

🏫 EdTech and educational institutions

AI used to determine access to education, assess students, or monitor students during examinations is listed in Annex III. EdTech companies building AI for these purposes - and educational institutions deploying them - face August 2026. Importantly, AI used solely for supplementary educational support tools (not in decisions about access, progression, or assessment) is likely to fall in the minimal-risk category with no mandatory obligations.

Exemptions and Exclusions

Not every use of AI triggers EU AI Act obligations. The following categories are explicitly excluded or carry significantly reduced obligations.

| Category | Scope of exemption | Limitations |
|---|---|---|
| Research and development | AI developed and tested for scientific research not yet placed on the market | Exemption ends the moment the system is released or deployed in a real-world context |
| Personal and non-professional use | Individuals using AI for purely personal purposes | Does not apply to organisations; does not apply to AI used in a professional context even if the individual is the end user |
| National security and defence | AI systems developed or used exclusively for military, national security, or defence purposes | Applies only where the use is exclusively for the exempt purpose; dual-use cases remain in scope |
| Micro-enterprises (deployers only) | Some reduced obligations for micro-enterprise deployers of high-risk AI in employment contexts | Micro-enterprise status does not exempt providers; core high-risk obligations still apply to deployers in most contexts |
| Open-source GPAI models (partial) | Open-source GPAI models are exempt from some documentation and transparency obligations | Exemption does not apply to open-source models that are designated systemic risk; prohibited use case rules always apply |

Penalties for Non-Compliance

The EU AI Act establishes a three-tier penalty structure, with fines calibrated to the severity of the violation and the size of the company. Fines are assessed by national market surveillance authorities and, for GPAI models, by the EU AI Office.

Violating prohibited AI practices: up to €35 million or 7% of total worldwide annual turnover

Violations of high-risk AI obligations, GPAI model obligations, and deployer obligations: up to €15 million or 3% of total worldwide annual turnover

Supplying incorrect, incomplete, or misleading information to authorities: up to €7.5 million or 1% of total worldwide annual turnover

The percentage-of-turnover figure applies when it is higher than the fixed amount. In the first two tiers, that makes the percentage the binding cap for any company with worldwide annual turnover above €500 million; in the third tier, the crossover is €750 million. For SMEs and startups, fines are capped at the lower of the fixed amount or the relevant percentage of turnover. National competent authorities also have the power to order the withdrawal of non-compliant AI systems from the EU market and to impose temporary bans on access.
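
The cap arithmetic described above can be sketched in a few lines. The figures used are the Article 99 ceilings (€35 million / 7%, €15 million / 3%, €7.5 million / 1%); the dictionary keys and the `max_fine_eur` function are hypothetical names, and the result is the statutory ceiling for a tier, not a prediction of the fine an authority would actually impose.

```python
# Fine ceilings per tier: (fixed amount in EUR, percent of worldwide annual turnover).
# Keys are illustrative labels for the three tiers described above.
FINE_CEILINGS = {
    "prohibited_practice": (35_000_000, 7),
    "other_obligations": (15_000_000, 3),
    "incorrect_information": (7_500_000, 1),
}


def max_fine_eur(tier: str, worldwide_turnover_eur: int, is_sme: bool = False) -> int:
    """Return the maximum fine in EUR for a tier and a given annual turnover.

    Standard rule: whichever of the fixed amount or the turnover percentage is higher.
    SMEs and startups: whichever of the two is lower.
    """
    fixed, percent = FINE_CEILINGS[tier]
    # Integer arithmetic avoids floating-point rounding on large euro amounts.
    by_turnover = worldwide_turnover_eur * percent // 100
    return min(fixed, by_turnover) if is_sme else max(fixed, by_turnover)


# A group with EUR 2 billion turnover: 7% exceeds the fixed EUR 35 million.
print(max_fine_eur("prohibited_practice", 2_000_000_000))
# An SME with EUR 10 million turnover: the lower of the two figures applies.
print(max_fine_eur("incorrect_information", 10_000_000, is_sme=True))
```

At exactly €500 million of turnover the two figures coincide for the first two tiers, which is why the percentage becomes the binding cap only above that level.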

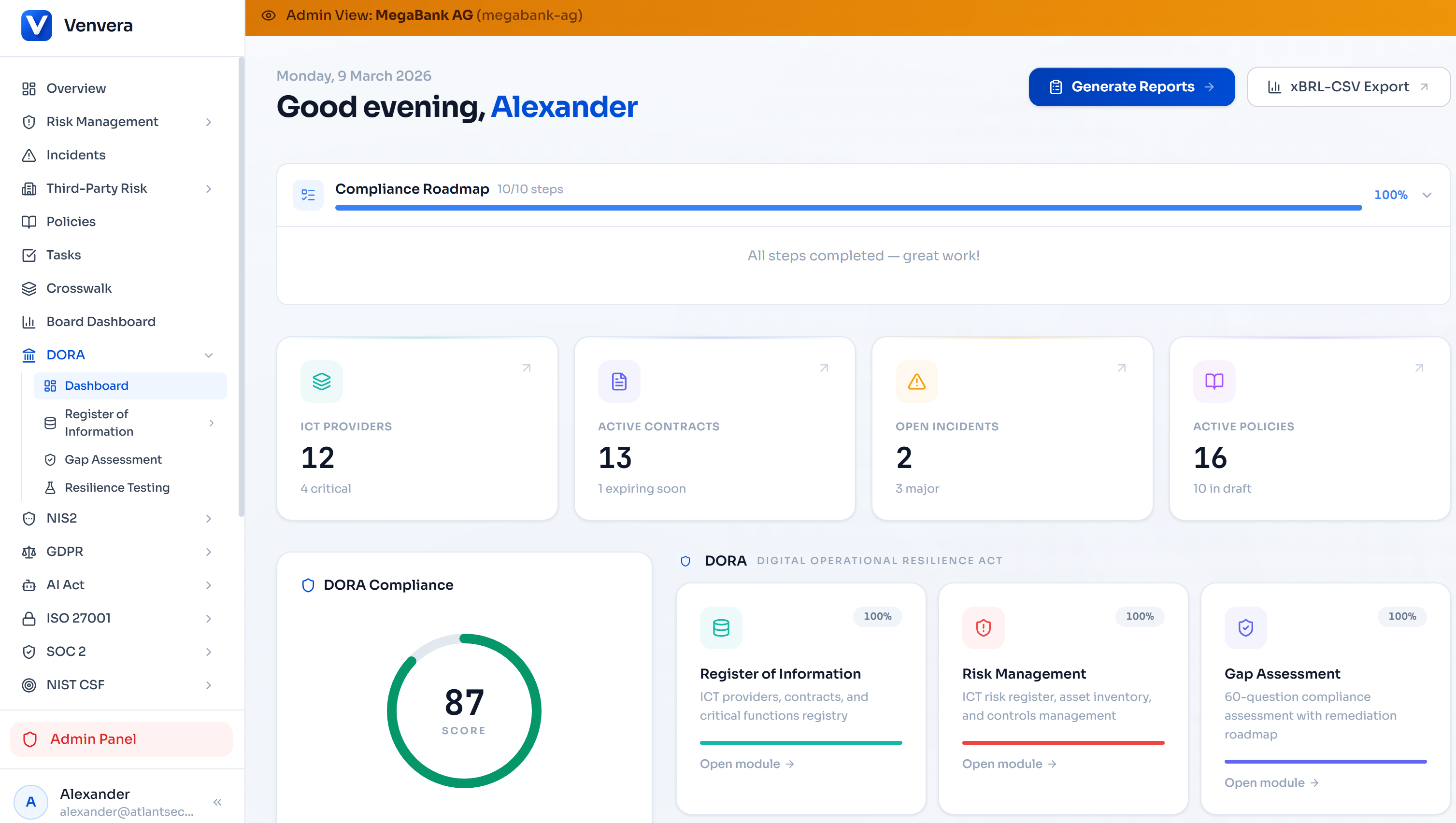

DORA-compliant. EU AI Act aware.

Venvera is built for EU-regulated financial entities navigating overlapping regulatory obligations - DORA, EU AI Act, and beyond. Purpose-built for European compliance, with EU data residency as standard.

Frequently Asked Questions

If I use a third-party AI tool, does the EU AI Act apply to me?

Yes, if you are an EU-established organisation using the tool for professional purposes, you are a deployer under the Act. Deployers of high-risk AI systems have significant obligations - including ensuring the AI is used in accordance with its intended purpose, implementing appropriate human oversight, monitoring performance, and informing employees when AI systems affect them. The provider's compliance does not extinguish your obligations as a deployer.

Does the EU AI Act apply if my company is based outside the EU but has EU customers?

Yes. The Act applies to providers placing AI systems on the EU market or into service in the EU, and to providers and deployers outside the EU when their AI system's output is used in the EU. Non-EU companies in scope must appoint an EU-based authorised representative for high-risk AI systems if they have no EU establishment.

How do I know if my AI system is high-risk?

High-risk systems fall into two groups. The first covers AI that is a safety component of a product governed by EU harmonisation legislation listed in Annex I (medical devices, machinery, vehicles, and so on). The second covers AI used for specific purposes listed in Annex III - biometric identification, critical infrastructure management, education, employment decisions, essential services access, law enforcement, migration and asylum, and administration of justice. If your AI system is used for any of these purposes, it is high-risk. The AI Act also empowers the Commission to update Annex III over time, so ongoing monitoring of additions is necessary.

Is ChatGPT covered by the EU AI Act?

ChatGPT - and similar large language models made available in the EU - falls under the GPAI model provisions of the Act. OpenAI, as the provider placing the model on the EU market, must comply with the GPAI chapter obligations from August 2025. If ChatGPT is used by an EU organisation for a high-risk purpose (such as in a system making employment decisions or assessing creditworthiness), the deployer also carries high-risk obligations even though the underlying model itself is general-purpose.

What does "placing on the market" mean for a SaaS AI product?

For SaaS AI products, "placing on the market" includes making the AI system available to EU customers via a subscription or API, regardless of where the infrastructure runs. If your SaaS product is accessible to and used by EU customers, it is on the EU market. You do not need to have a physical product or ship software on media - digital availability is sufficient to trigger in-scope status.

Are open-source AI models exempt from the EU AI Act?

Partially. Open-source GPAI models are exempt from some of the GPAI chapter obligations - specifically certain documentation and transparency requirements. However, open-source models are not exempt from the prohibited AI rules, and open-source GPAI models that are designated as systemic risk (based on the training compute threshold) carry the same systemic risk obligations as closed models. The open-source exemptions are narrower than many initially assume.

Written by the Venvera compliance team. Venvera is a purpose-built compliance platform for European financial entities. This article reflects the EU AI Act as enacted and the compliance timeline as of February 2026. Last updated: February 2026.