📋 What this article covers: How the EU AI Act applies to healthcare AI specifically, the two compliance tracks for medical AI systems, which systems are high-risk and why, how the AI Act interacts with the Medical Device Regulation and IVDR, what hospitals and clinical institutions must do as deployers, the compliance timeline for healthcare, and the most common classification mistakes in this sector.

I had a conversation recently with the chief medical officer of a German digital health company that had been developing an AI-powered chest X-ray analysis tool for two years. They had CE-marked the product as a Class IIa medical device under the MDR. They were proud of that - it had taken eighteen months and a significant budget. When I asked what they had done about the EU AI Act, there was a long pause.

"We assumed the MDR covered it," he said. "We did a conformity assessment. Isn't that the same thing?"

It is not the same thing. The MDR assesses the safety and clinical performance of the device as a medical product. The EU AI Act assesses whether the AI system embedded in the device meets a separate set of requirements around transparency, data governance, human oversight, risk management, and technical documentation - requirements that the MDR conformity process does not fully address. Passing one does not mean passing the other. For most medical AI systems, both apply simultaneously and the compliance programmes need to be coordinated.

Healthcare is one of the sectors where the EU AI Act lands heaviest. The combination of Track 1 (AI as a safety component of a regulated medical device) and Track 2 (AI used in high-stakes healthcare decisions) means that a very large proportion of clinical AI systems are high-risk. This article gives you the complete picture.

📌 Jump to section

- The two tracks for healthcare AI

- Track 1 - AI embedded in medical devices

- Track 2 - Standalone clinical AI under Annex III

- Which specific systems are high-risk

- How the AI Act and MDR interact

- What hospitals and clinics must do as deployers

- The compliance timeline for healthcare AI

- Classification mistakes specific to healthcare

The Two Tracks for Healthcare AI

Healthcare AI can reach high-risk status through either of the EU AI Act's two classification tracks - and in many cases, through both simultaneously. Understanding which track applies to your system is the starting point for every other compliance decision, because the tracks carry different conformity assessment routes and different compliance deadlines.

Track 1: AI embedded in a CE-marked medical device

AI that constitutes a safety component of a product regulated under the MDR (Regulation (EU) 2017/745) or IVDR (Regulation (EU) 2017/746), or that is itself a medical device with an AI component. High-risk obligations apply from August 2027.

Track 2: Standalone clinical AI listed in Annex III

AI used in clinical decision-making - diagnosis, treatment, triage, clinical resource management - that is not a regulated medical device but significantly influences individual patient outcomes. High-risk obligations apply from August 2026.

The one-year difference in deadlines matters practically. Medical device manufacturers integrating AI into CE-marked products have until August 2027. Companies offering standalone clinical AI software that influences patient decisions - without being a regulated medical device - face August 2026. Hospitals deploying either type as users have August 2026 deployer obligations regardless of which track the product falls under.
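The deadline logic in the paragraph above is simple enough to encode as a lookup, which can be handy in an internal AI inventory. The following is a minimal sketch, not an official tool: the `Track` and `Role` names and the function itself are illustrative assumptions, and the dates are the ones given in this article.

```python
from datetime import date
from enum import Enum


class Track(Enum):
    MEDICAL_DEVICE = 1  # Track 1: AI embedded in a CE-marked MDR/IVDR device
    ANNEX_III = 2       # Track 2: standalone clinical AI listed in Annex III


class Role(Enum):
    PROVIDER = "provider"  # manufacturer or software vendor
    DEPLOYER = "deployer"  # hospital or clinic using the system


def compliance_deadline(track: Track, role: Role) -> date:
    """Return the application date described in this article (hypothetical helper)."""
    # Deployers face August 2026 whichever track the product falls under.
    if role is Role.DEPLOYER:
        return date(2026, 8, 2)
    # Providers of Track 1 systems get the extra year; Track 2 providers do not.
    if track is Track.MEDICAL_DEVICE:
        return date(2027, 8, 2)
    return date(2026, 8, 2)


if __name__ == "__main__":
    for track in Track:
        for role in Role:
            print(track.name, role.value, compliance_deadline(track, role))
```

Treat the output as a planning aid only; the applicable date for a real system depends on its actual classification.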

Track 1 - AI Embedded in Medical Devices

Track 1 applies when an AI system forms a safety component of a product regulated under EU medical device legislation. In the healthcare context, this means any AI that is integral to a CE-marked medical device under the MDR or IVDR, where the AI component performs a function that is material to the device's clinical purpose or safety profile.

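The medical device classifications under MDR - Class I through Class III - do not map directly onto the AI Act's high-risk classification. Under Article 6(1) of the AI Act, two conditions must both be met: the AI is a safety component of the device (or is itself the device), and the device must undergo third-party conformity assessment by a Notified Body under the MDR or IVDR. Devices in Class IIa and above meet the second condition; a self-certified Class I device generally does not, even if its AI component is safety-relevant. The device classification governs the conformity assessment route under MDR; the AI Act imposes its own requirements on top.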

| Medical AI example | MDR/IVDR classification | AI Act Track 1? |
|---|---|---|
| AI analysing retinal images to detect diabetic retinopathy | MDR Class IIa or IIb (medical device for diagnosis) | ✅ Yes |
| AI interpreting ECG signals to detect arrhythmias | MDR Class IIa or III | ✅ Yes |
| AI in an in-vitro diagnostic device interpreting blood test results | IVDR Class B, C, or D | ✅ Yes |
| AI controlling drug dosage in an infusion pump | MDR Class IIb or III | ✅ Yes |
| AI providing real-time navigation guidance during robotic surgery | MDR Class III (active therapeutic device) | ✅ Yes |
| AI generating a patient-facing exercise plan in a general wellness app with no diagnostic claims | Not a regulated medical device | ❌ No (Track 1) |

Track 2 - Standalone Clinical AI Under Annex III

Not all clinical AI is a regulated medical device. A large and growing category of healthcare AI systems sits outside MDR/IVDR scope - clinical decision support tools, AI used for resource allocation, patient triage algorithms, administrative AI that influences care decisions, and AI used in clinical research contexts. These systems are not CE-marked medical devices, but many of them significantly influence individual patient outcomes. For these, Track 2 applies.

The relevant Annex III category for standalone clinical AI is not explicitly labelled "healthcare." Instead, it falls under category 5 - access to and enjoyment of essential private services and essential public services and benefits, whose listed use cases include eligibility for healthcare services and emergency healthcare patient triage - and potentially category 1 (biometrics in clinical contexts) or category 2 (critical infrastructure, for AI managing life-critical health infrastructure). The essential services framing covers AI that determines or significantly influences access to healthcare - including who receives treatment, in what priority, and under what conditions.

The key qualifier is whether the AI system makes or significantly influences decisions that have legal or similarly significant effects on individual patients - affecting access to care, treatment prioritisation, or the clinical pathway a patient follows. AI that does this is high-risk under Track 2 regardless of whether it carries MDR CE-marking.
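To make the two-track test concrete, here is a minimal screening sketch. It assumes the two conditions of Article 6(1) for Track 1 (the AI is, or is a safety component of, a regulated device, and that device needs third-party conformity assessment) and reduces the Track 2 qualifier above to a single yes/no flag. The class, field, and function names are hypothetical, and a real classification needs a documented legal assessment rather than three booleans.

```python
from dataclasses import dataclass


@dataclass
class ClinicalAISystem:
    name: str
    # Track 1 inputs: the AI is a regulated MDR/IVDR device or a safety
    # component of one, and that device needs a Notified Body.
    is_or_in_regulated_device: bool
    needs_notified_body: bool
    # Track 2 input: the output significantly influences access to care,
    # prioritisation, or the clinical pathway of individual patients.
    influences_individual_care: bool


def high_risk_tracks(system: ClinicalAISystem) -> list[str]:
    """Return the tracks under which the system screens as high-risk."""
    tracks = []
    if system.is_or_in_regulated_device and system.needs_notified_body:
        tracks.append("Track 1")
    if system.influences_individual_care:
        tracks.append("Track 2")
    return tracks


examples = [
    ClinicalAISystem("chest X-ray analysis, MDR Class IIa", True, True, True),
    ClinicalAISystem("emergency department triage score, no CE mark", False, False, True),
    ClinicalAISystem("wellness app exercise plan", False, False, False),
]

if __name__ == "__main__":
    for system in examples:
        print(system.name, "->", high_risk_tracks(system) or "not high-risk on this screen")
```

An empty result is only a first-pass signal that the system may sit outside the high-risk tier; it does not replace the case-by-case assessment.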

Which Specific Healthcare AI Systems Are High-Risk

Working through common healthcare AI use cases against the two tracks produces the following picture. This is not exhaustive but covers the systems that generate the most classification questions in practice.

- AI that analyses radiology images (X-ray, CT, MRI, PET), pathology slides, dermatology images, or ophthalmology scans to detect, identify, or characterise disease. Typically CE-marked as MDR SaMD - Track 1. Compliance deadline August 2027.

- AI that generates treatment recommendations, drug dosing suggestions, or differential diagnoses that a clinician acts on in patient care. If the AI output influences a clinical decision about a specific patient, it is high-risk - whether CE-marked (Track 1, August 2027) or not (Track 2, August 2026).

- AI used in emergency departments, ICUs, or outpatient settings to score patient acuity, prioritise treatment order, or flag patients requiring urgent escalation. Determines access to care - Track 2 high-risk regardless of MDR status. Deadline August 2026.

- AI that generates patient risk scores influencing clinical interventions - early warning scores, sepsis prediction tools, readmission risk models. If the output drives a clinical decision about that patient's care, it is high-risk under Track 2. Deadline August 2026.

- AI that controls, guides, or provides real-time intraoperative assistance in surgical systems. MDR Class IIb or III. Track 1 high-risk. Deadline August 2027.

- AI embedded in or constituting an in-vitro diagnostic device - interpreting genetic sequencing data, analysing pathology samples, reading point-of-care test results. Governed by IVDR. Track 1 high-risk. Deadline August 2027.

The following healthcare AI use cases are more likely to fall outside the high-risk tier - though each case requires its own assessment.

- AI that optimises appointment scheduling, bed management, or staff rostering without influencing individual clinical decisions - not determining access to care at the individual patient level.

- AI in consumer apps making general health recommendations (step targets, sleep coaching, nutrition suggestions) that do not constitute medical advice and are not CE-marked medical devices.

- AI that helps clinicians search or summarise medical literature without generating patient-specific recommendations - a research support tool, not a clinical decision system.

- AI that transcribes, structures, or summarises clinical notes without generating clinical recommendations or influencing patient care decisions - though note that if the output feeds into a system that does influence care decisions, the chain of influence matters.

How the AI Act and MDR Interact

This is the question that trips up most medical device companies. The short answer is that the two regulations are complementary but not duplicative - compliance with one does not discharge compliance with the other, and the requirements do not fully overlap.

| Requirement area | MDR/IVDR | EU AI Act | Overlap? |
|---|---|---|---|
| Clinical performance / safety | ✅ Comprehensive | Partial | MDR more comprehensive on clinical evidence; AI Act adds accuracy and robustness requirements |
| Technical documentation | ✅ Required | ✅ Required | Significant overlap, but the AI Act requires additional AI-specific fields - training data governance, model architecture details, bias assessment |
| Risk management | ✅ ISO 14971 | ✅ Required | ISO 14971 risk management satisfies much of the AI Act risk management requirement; AI-specific risk categories (bias, data drift) require additional work |
| Human oversight by design | Partial (usability) | ✅ Explicit requirement | AI Act's human oversight requirement is more specific than MDR usability requirements - requires design enabling humans to understand, monitor, and override the AI |
| Training data governance | Limited | ✅ Comprehensive | AI Act adds explicit requirements for training data relevance, representativeness, and bias assessment that MDR does not fully address |
| Post-market surveillance | ✅ Required | ✅ Required | Strong overlap; MDR PMS systems can be extended to cover AI Act post-market monitoring with targeted additions |
| EU database registration | ✅ EUDAMED | ✅ EU AI database | Two separate registrations in two separate databases - EUDAMED for MDR, the EU AI database for the AI Act. Not interchangeable. |
| Conformity assessment body | Notified Body (for Class IIa+) | Track 1: assessed within the MDR/IVDR procedure; Track 2: self-assessment, except some biometric AI | For Track 1 systems, Article 43(3) of the AI Act makes its requirements part of the MDR/IVDR conformity assessment, so the Notified Body involved there also examines them. Most standalone Annex III systems can self-assess - but the self-assessment must be rigorous and documented |

The practical takeaway for medical device manufacturers is this: use your existing MDR compliance work as a foundation and identify what the AI Act requires that MDR does not cover. Training data governance, the specific human oversight design requirement, the EU AI database registration, and the AI Act's technical documentation format are the areas most likely to require new work on top of MDR compliance.
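One lightweight way to run that gap exercise is to list, per requirement area, what the AI Act adds on top of MDR and then track which items are closed. The sketch below takes its additions from the comparison table above; the dictionary layout, key names, and function are illustrative assumptions rather than any prescribed format.

```python
# AI Act additions on top of MDR/IVDR work, taken from the table above.
AI_ACT_ADDITIONS = {
    "technical documentation": [
        "training data governance",
        "model architecture details",
        "bias assessment",
    ],
    "risk management": ["bias risks", "data drift risks"],
    "human oversight": ["design lets humans understand, monitor, and override the AI"],
    "training data governance": ["relevance", "representativeness", "bias assessment"],
    "post-market surveillance": ["AI Act post-market monitoring additions"],
    "registration": ["EU AI database entry"],
}


def open_gaps(done: set[tuple[str, str]]) -> dict[str, list[str]]:
    """Return additions not yet marked done, grouped by requirement area.

    `done` holds (area, item) pairs that the team has closed.
    """
    gaps = {}
    for area, items in AI_ACT_ADDITIONS.items():
        remaining = [item for item in items if (area, item) not in done]
        if remaining:
            gaps[area] = remaining
    return gaps


if __name__ == "__main__":
    closed = {
        ("registration", "EU AI database entry"),
        ("risk management", "bias risks"),
    }
    for area, items in open_gaps(closed).items():
        print(f"{area}: {', '.join(items)}")
```

Keeping the list keyed by requirement area makes it easy to map each open item back to the MDR document it extends.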

What Hospitals and Clinics Must Do as Deployers

A hospital that purchases and uses a clinical AI system from a vendor is a deployer under the EU AI Act. The vendor being compliant as a provider does not eliminate the hospital's own obligations: the vendor carries the provider obligations, and the hospital retains its own deployer duties.

For healthcare deployers, the obligations that apply from August 2026 include the following.

Implement genuine human oversight

Ensure that clinical staff who use AI outputs understand the system's limitations, know how to interpret and challenge its recommendations, and have the authority and practical ability to override or disregard AI outputs. Governance frameworks for AI use in clinical settings must be in place - not just a sign-off policy, but evidence that oversight is actually exercised.

Use the system only for its intended purpose

High-risk AI systems must be deployed in accordance with the provider's instructions for use. A hospital that uses a diagnostic imaging AI tool for a purpose the vendor has not validated - for example, applying a breast cancer detection model to a different imaging context - may become a provider rather than a deployer, acquiring the full provider obligation set.

Inform affected patients

Where a high-risk AI system is used to make or significantly assist a decision about an individual patient, that person must be informed that AI has been used. This transparency obligation falls on the deployer - the hospital or clinic - not on the AI vendor.

Monitor performance and report incidents

Deployers must monitor high-risk AI systems in operational use and report serious incidents or malfunctions to the provider and, where the incident has caused harm, to market surveillance authorities. Healthcare deployers should integrate AI incident reporting into their existing clinical incident management frameworks.

Register in the EU AI database (public deployers)

Public authorities - including publicly funded hospitals and health systems - that deploy high-risk AI systems must register the deployment in the EU AI database before putting the system into service. Private healthcare providers are not subject to this specific obligation but may be required to cooperate with registration requests.
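The five deployer obligations above lend themselves to a per-system checklist. The following sketch assumes a simple record per deployed system; the field names are hypothetical, and the only rule it encodes beyond the list is that EU AI database registration applies to public deployers.

```python
from dataclasses import dataclass


@dataclass
class Deployment:
    system_name: str
    public_authority: bool              # publicly funded hospital or health system
    oversight_in_place: bool            # trained staff with authority to override
    used_within_intended_purpose: bool  # matches the provider's instructions for use
    patients_informed: bool
    incident_reporting_in_place: bool
    registered_in_eu_ai_database: bool = False


def outstanding_obligations(d: Deployment) -> list[str]:
    """List the deployer obligations from this section that are still open."""
    checks = [
        (d.oversight_in_place, "implement genuine human oversight"),
        (d.used_within_intended_purpose, "use the system only for its intended purpose"),
        (d.patients_informed, "inform affected patients"),
        (d.incident_reporting_in_place, "monitor performance and report incidents"),
    ]
    open_items = [label for ok, label in checks if not ok]
    # Registration applies to public deployers only.
    if d.public_authority and not d.registered_in_eu_ai_database:
        open_items.append("register in the EU AI database")
    return open_items


if __name__ == "__main__":
    triage = Deployment("ED triage score", True, True, True, False, True)
    print(outstanding_obligations(triage))
```

A checklist like this records status only; the evidence that oversight is actually exercised still has to come from the clinical governance process itself.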

The Compliance Timeline for Healthcare AI

| Date | What applies | Who is affected |
|---|---|---|
| 2 Feb 2025 | Prohibited AI practices unlawful - includes biometric surveillance and social scoring systems | All healthcare organisations deploying any AI |
| 2 Aug 2026 | High-risk AI obligations for Annex III systems - deployer obligations for hospitals; provider obligations for standalone clinical AI not regulated as a medical device | Hospitals and clinics using clinical AI; providers of standalone clinical AI SaaS not CE-marked under MDR |
| 2 Aug 2027 | High-risk AI obligations for Track 1 systems - AI embedded in CE-marked medical devices | Medical device manufacturers with AI components; IVD companies; SaMD providers with MDR CE-marking |

Classification Mistakes Specific to Healthcare

CE-marking obtained under the MDR demonstrates MDR conformity. It does not by itself demonstrate conformity with the EU AI Act. The two regimes address different requirements, and the AI Act requirements must be assessed in their own right, though the documentation for both can be coordinated and built in parallel to reduce duplication of effort.

The presence of a clinician in the loop does not remove high-risk classification. If the AI output significantly influences the clinical decision - including by framing the information the clinician sees or by generating a score that shapes their assessment - the system is high-risk. The AI Act's human oversight requirement presupposes high-risk status: it is an obligation imposed on high-risk systems, which must be designed to support genuine oversight, not a route out of the classification.

The vendor's compliance as a provider does not discharge the hospital's deployer obligations. Human oversight implementation, patient notification, incident monitoring, and - for public hospitals - EU AI database registration are deployer obligations that cannot be contractually transferred to the vendor. Hospitals must build their own AI governance frameworks and not rely on vendor compliance as a substitute.

Over-classification also creates problems. Companies over-classifying general wellness apps as high-risk create unnecessary regulatory burden without delivering any compliance benefit. The classification should be based on whether the AI generates outputs that influence access to healthcare or individual clinical decisions - not on whether the app is health-related in a general sense.

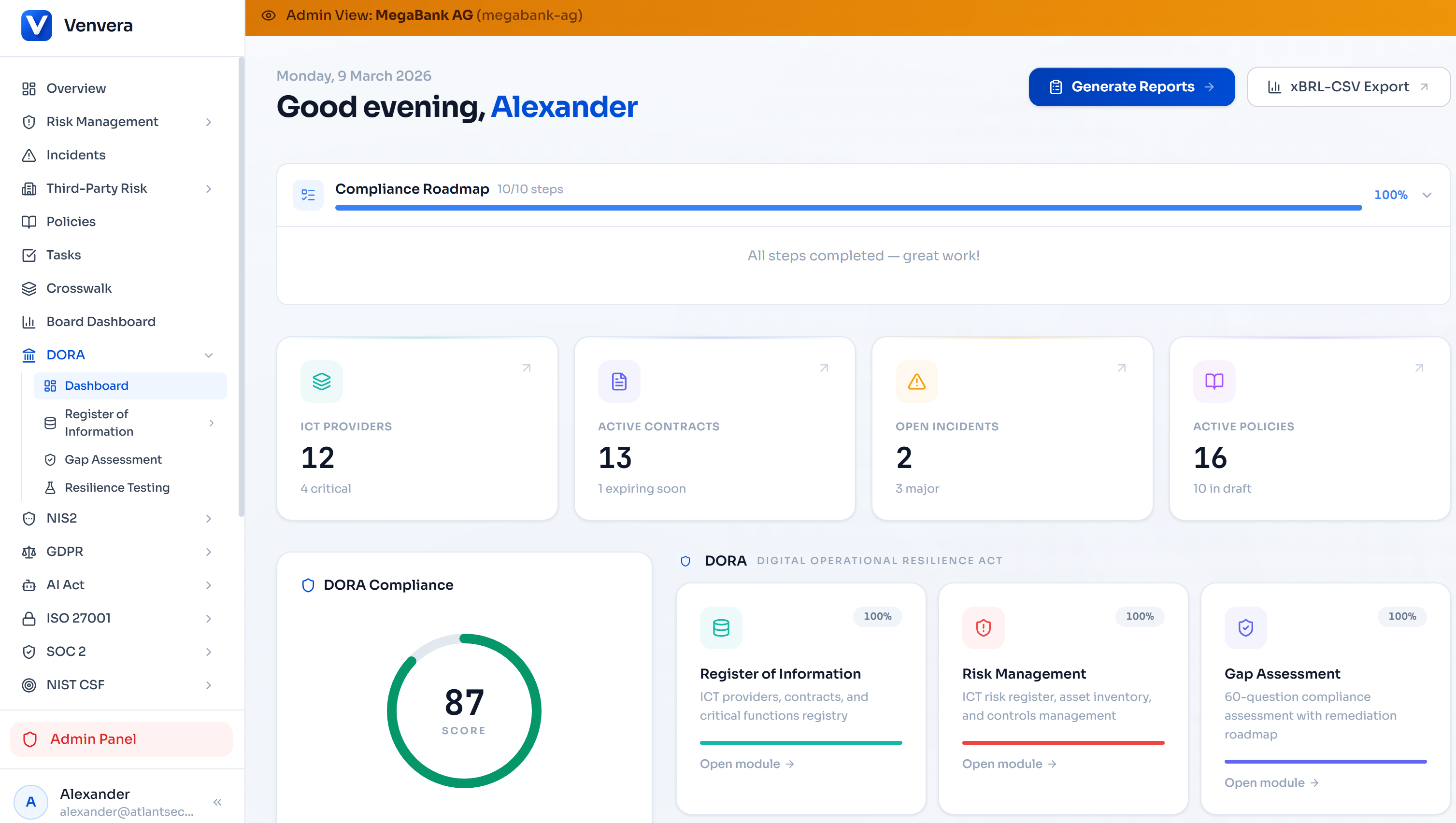

Managing EU AI Act compliance alongside DORA and MDR?

Venvera is purpose-built for European regulated entities navigating overlapping compliance obligations - with EU data residency, structured gap assessment, and documentation tooling built for the regulatory environment you are actually working in.

Learn more → Venvera.comFrequently Asked Questions

We built an AI model for internal clinical research. Does the EU AI Act apply?

Research AI that is not placed on the market and not used in clinical decisions affecting real patients falls within the research exemption. However, if your research AI is used in retrospective analysis that informs clinical protocols, or if outputs are used in any way that feeds into real patient care, the exemption does not apply. The distinction between research use and operational use requires honest assessment of how the AI output actually flows into clinical practice.

Does a Class I MDR device with an AI component fall under Track 1?

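Only in limited cases. Track 1 requires two things under Article 6(1): the AI constitutes a safety component of the device (or is itself the device) - meaning a failure or degraded performance of the AI component could affect the safety of the product or of persons - and the device is subject to third-party conformity assessment under the MDR. Most Class I devices are self-certified, so they fail the second condition; Class I devices that do involve a Notified Body (sterile, measuring, or reusable surgical instruments) can qualify. MDR device class and AI Act high-risk classification are determined by different criteria, and a Class I device that falls outside Track 1 may still be high-risk under Track 2 if it matches an Annex III use case.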

Our hospital uses a US-developed clinical AI tool. Do we have AI Act obligations?

Yes. As an EU-established deployer of a high-risk AI system, you have deployer obligations regardless of where the vendor is based. The vendor should be compliant as a non-EU provider (including appointing an authorised representative in the EU for high-risk AI systems). If the vendor is not compliant, your procurement contract should address this - and you should consider whether continuing to use the system creates regulatory exposure for your organisation.

What does meaningful human oversight look like for diagnostic AI in practice?

Meaningful oversight means that the clinician reviewing an AI output genuinely understands what the AI is telling them, can access information about the system's confidence level and known limitations, and makes an independent clinical judgement rather than simply validating the AI's output. Workflows where clinicians feel unable to override AI recommendations - due to time pressure, lack of training, or institutional culture - do not constitute meaningful oversight even if they technically involve a human step. Deployers are responsible for ensuring their workflows support genuine oversight, not just formal compliance.
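One way a deployer might look for rubber-stamping is to compute simple signals from the review log: how often clinicians override the AI, and how often they accept it after only a few seconds. The sketch below is an illustration under assumptions, not a compliance test. The `Review` record, its fields, and the ten-second threshold are hypothetical, and the AI Act does not prescribe any such metric.

```python
from dataclasses import dataclass


@dataclass
class Review:
    clinician_id: str
    ai_finding: str
    final_finding: str
    seconds_spent: float


def oversight_signals(reviews: list[Review], min_seconds: float = 10.0) -> dict[str, float]:
    """Return override rate and rapid-accept rate for a batch of reviews."""
    total = len(reviews)
    if total == 0:
        return {"override_rate": 0.0, "rapid_accept_rate": 0.0}
    # An override is any case where the clinician's final finding differs.
    overrides = sum(r.ai_finding != r.final_finding for r in reviews)
    # A rapid accept is agreement reached faster than the chosen threshold.
    rapid = sum(
        r.ai_finding == r.final_finding and r.seconds_spent < min_seconds
        for r in reviews
    )
    return {"override_rate": overrides / total, "rapid_accept_rate": rapid / total}


if __name__ == "__main__":
    log = [
        Review("c1", "nodule", "nodule", 5),
        Review("c1", "nodule", "no finding", 60),
        Review("c2", "clear", "clear", 3),
        Review("c2", "clear", "clear", 45),
    ]
    print(oversight_signals(log))
```

A very low override rate or a high rapid-accept rate is a prompt to look at training, workload, and culture; a highly accurate system will also produce few overrides, so neither number is conclusive on its own.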

Can we use our existing MDR post-market surveillance system for AI Act post-market monitoring?

Yes, with targeted additions. Your MDR post-market surveillance process covers much of what the AI Act requires for post-market monitoring - adverse event reporting, systematic review of performance data, and feedback integration. The main gaps to address are AI-specific: monitoring for data drift (where the AI's performance degrades because the input data distribution has changed), bias monitoring across patient demographics, and the AI Act's specific serious incident reporting channel to market surveillance authorities distinct from MDR vigilance reporting to the NCA medical device authority.
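As an illustration of the two AI-specific checks named above, the sketch below computes a population stability index (PSI) for input drift and per-group sensitivity for bias monitoring. PSI is one common drift statistic chosen here as an assumption; the AI Act does not mandate a particular metric, and the function names, bin count, and any alert threshold are choices for your own team to make.

```python
import math
from collections import defaultdict


def population_stability_index(reference, current, bins=10, eps=1e-6):
    """PSI between two samples of one numeric input feature or model score."""
    lo = min(min(reference), min(current))
    hi = max(max(reference), max(current))
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if every value is equal

    def proportions(values):
        counts = [0] * bins
        for v in values:
            counts[min(int((v - lo) / width), bins - 1)] += 1
        # Floor at eps so empty bins do not produce log(0).
        return [max(c / len(values), eps) for c in counts]

    ref, cur = proportions(reference), proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


def sensitivity_by_group(records):
    """Sensitivity per demographic group.

    `records` is an iterable of (group, has_condition, ai_flagged) tuples.
    Groups with no positive cases are omitted.
    """
    positives = defaultdict(int)
    true_positives = defaultdict(int)
    for group, has_condition, ai_flagged in records:
        if has_condition:
            positives[group] += 1
            true_positives[group] += int(ai_flagged)
    return {g: true_positives[g] / positives[g] for g in positives}


if __name__ == "__main__":
    validation = [i / 100 for i in range(100)]
    this_month = [0.5 + i / 200 for i in range(100)]
    print("PSI:", round(population_stability_index(validation, this_month), 3))
```

Running both checks on a fixed schedule and filing the results alongside the existing MDR post-market surveillance reports keeps the AI-specific monitoring in one place.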

Written by the Venvera compliance team. Venvera is a purpose-built compliance platform for European regulated entities. This article reflects the EU AI Act as enacted and the regulatory position as of February 2026. It is provided for informational purposes and does not constitute legal advice. Last updated: February 2026.