The most expensive mistake I've seen compliance teams make with DORA isn't getting a technical requirement wrong. It's spending six months working intensely on the wrong things while critical gaps sit unaddressed in areas they haven't even evaluated yet.

A payment institution I spoke with last year had built an excellent ICT incident response procedure - detailed, well-tested, aligned to DORA's classification criteria. What they hadn't done was map a single sub-outsourcing arrangement for any of their 47 ICT providers. Their Register of Information was structurally incomplete in a way that would fail NCA validation, and they had no visibility into that gap because their gap assessment had focused on the policy and procedure layer rather than the data and reporting layer.

A good DORA gap assessment doesn't just ask "have you done the thing?" It scores maturity across every domain that DORA touches, weights the gaps by regulatory priority and remediation effort, and produces an output that your management team can actually act on. This article gives you that framework - a scoring methodology you can run yourself, domain by domain, and use to produce a gap assessment report that tells you exactly where you stand and what to fix first.

📋 What this article gives you: A complete domain-by-domain DORA gap assessment framework with scoring criteria for each area, a maturity model you can apply consistently across your organisation, guidance on how to weight and prioritise the gaps you find, a ready-to-use scoring template structure, and a clear view of what "good" looks like in each domain so you can set realistic targets.


Why Most DORA Gap Assessments Fall Short

The gap assessment approaches most compliance teams use - whether developed internally or inherited from a consultant - tend to share three structural weaknesses that limit their usefulness.

They conflate existence with adequacy. Asking "do you have an ICT risk management framework?" and recording "yes" tells you almost nothing about whether that framework meets DORA's specific requirements. A framework written in 2019 to satisfy EBA outsourcing guidelines may have no coverage of the new DORA requirements around ICT concentration risk, third-party monitoring, or proportionality. Existence-based assessments produce false comfort.

They focus on the policy layer and ignore the operational layer. DORA is unusual among EU financial regulations in that it has specific, verifiable operational outputs - particularly the Register of Information and the xBRL-CSV submission. A gap assessment that only evaluates whether policies and procedures exist, without evaluating whether the organisation can actually produce those outputs, misses the most immediate regulatory risk.
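To make the operational-layer point concrete, here is a minimal Python sketch of the kind of output check a gap assessment can run against register data instead of asking whether a register policy exists. It assumes a simplified, hypothetical record structure (`ProviderRecord`, `subcontractors`, `supports_critical_function` are illustrative names, not the official Register of Information templates). It flags providers whose sub-outsourcing chain has never been mapped, which is the gap in the payment-institution example above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ProviderRecord:
    """Hypothetical, simplified register entry for one ICT third-party provider."""

    provider_id: str
    supports_critical_function: bool
    # None means sub-outsourcing was never assessed; [] means assessed, none found.
    subcontractors: Optional[list[str]] = None


def unmapped_sub_outsourcing(records: list[ProviderRecord]) -> list[str]:
    """IDs of providers with no mapped sub-outsourcing chain, critical ones first."""
    unmapped = [r for r in records if r.subcontractors is None]
    # Stable sort: providers supporting critical or important functions come first.
    unmapped.sort(key=lambda r: not r.supports_critical_function)
    return [r.provider_id for r in unmapped]


def completeness_ratio(records: list[ProviderRecord]) -> float:
    """Share of providers whose sub-outsourcing chain has been mapped (even if empty)."""
    if not records:
        return 0.0
    mapped = sum(1 for r in records if r.subcontractors is not None)
    return mapped / len(records)


register = [
    ProviderRecord("cloud-01", True, ["dc-operator-a"]),
    ProviderRecord("core-banking-02", True),  # never assessed
    ProviderRecord("hr-saas-03", False, []),  # assessed, no subcontractors
]
print(unmapped_sub_outsourcing(register))  # ['core-banking-02']
print(f"{completeness_ratio(register):.0%}")  # 67%
```

The key design choice is distinguishing "not assessed" (`None`) from "assessed, nothing found" (an empty list): an existence-based check would treat both as complete.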

They don't produce a prioritised action plan. A list of gaps without a clear hierarchy of urgency and effort is difficult for management to act on. Different gaps carry different levels of regulatory risk, and remediation resources are always finite. An effective gap assessment must tell you not just what's missing but in what order to fix it.

The Maturity Scoring Model

Use a four-level maturity scale for each domain. Four levels - rather than five or ten - give you enough granularity to distinguish meaningfully different states without creating false precision that the data doesn't support. Each level has a precise definition so that scores are consistent across domains and across assessors.

Score 1

The requirement is either unknown to the organisation, known but not started, or addressed only through ad-hoc efforts with no documentation, ownership, or repeatability. A score of 1 means the gap is significant and remediating it requires building from scratch.

Score 2

Something is in place - a policy exists, a process has been started, some data has been collected - but it does not meet DORA's requirements in full. Coverage is incomplete, documentation is inadequate, or the approach hasn't been tested. A score of 2 means work is underway but significant remediation is still needed.

Score 3

The requirement is addressed in a documented, structured way that aligns with DORA's text. Policies, procedures, and data exist and are maintained. However, the approach hasn't been tested under realistic conditions, evidence of effectiveness is limited, or the process depends heavily on specific individuals rather than embedded practices. A score of 3 represents the regulatory baseline.

Score 4

The requirement is met comprehensively, evidence of effectiveness exists, the approach has been tested or audited, and continuous improvement processes are in place. The organisation could demonstrate compliance to an NCA supervisor with confidence. A score of 4 is the target for high-priority domains.

One important calibration note: a score of 3 means you meet the regulatory baseline - not that you are done. Supervisory scrutiny, TLPT exercises, and NCA inspections will probe beyond baseline adequacy. For domains that carry the highest regulatory enforcement risk, targeting a 4 is worth the investment.
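One way to keep scores consistent across domains and assessors is to encode the scale once and reuse it everywhere. This is a minimal Python sketch, assuming scores are recorded as integers 1 to 4; the member names are hypothetical shorthand for the level definitions above, not official DORA terminology.

```python
from enum import IntEnum


class Maturity(IntEnum):
    """Four-level scale; member names are hypothetical shorthand for the definitions."""

    NOT_ADDRESSED = 1  # unknown, not started, or ad hoc with no ownership
    PARTIAL = 2        # something in place, but short of DORA's requirements
    BASELINE = 3       # documented and structured; the regulatory baseline
    DEMONSTRABLE = 4   # tested or audited, evidenced, continuously improved


def gap_to_baseline(score: Maturity) -> int:
    """Levels below the baseline of 3 (0 when at or above it)."""
    return max(0, Maturity.BASELINE - score)


def target_for(highest_risk: bool) -> Maturity:
    """Target 4 where enforcement risk is highest, otherwise the baseline."""
    return Maturity.DEMONSTRABLE if highest_risk else Maturity.BASELINE


score = Maturity(2)  # raises ValueError for anything outside 1-4
print(score.name, gap_to_baseline(score))  # PARTIAL 1
```

Because `Maturity(5)` raises a `ValueError`, an out-of-range score entered by one assessor fails loudly instead of silently skewing the results.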

The Seven DORA Domains - Scored

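DORA's requirements organise naturally into seven domains: ICT risk management (D1), incident management (D2), testing (D3), ICT third-party risk (D4), the Register of Information (D5), information sharing (D6), and governance (D7). For each domain, the following sections give you the scoring criteria for levels 1 through 4, the key evidence you would expect to see at each level, and the specific sub-requirements that are most commonly underdeveloped in first assessments.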

Weighting and Prioritisation

Not all gaps carry equal regulatory weight. Once you've scored each domain, apply a weighting that reflects NCA enforcement priority and the practical consequence of a gap in each area. The following weightings reflect the relative regulatory stakes based on the domains NCAs have focused on most in supervisory activity since DORA became applicable.

| Domain | Regulatory weight | Rationale |
|---|---|---|
| D5 - Register of Information | Critical | Direct NCA submission with hard deadlines; failures are immediately visible to regulators; first area of supervisory focus |
| D2 - Incident Management | Critical | 4-hour notification failures are immediately apparent; regulatory consequences of missed reporting are severe and well-documented |
| D4 - ICT Third-Party Risk | High | Systemic risk concern for regulators; Article 30(2) gaps are verifiable through contract review; concentration risk a macro-prudential priority |
| D7 - Governance | High | Management body accountability is a recurring NCA supervisory focus; individual accountability gaps are reputationally and legally significant |
| D1 - ICT Risk Management | Medium | Foundational requirement; NCA inspections will review; longer remediation timeframe but high baseline adequacy expected |
| D3 - Testing | Medium | Annual testing is a firm obligation; TLPT is significant for in-scope entities but applies only to a subset |
| D6 - Information Sharing | Lower | Voluntary; NCA scrutiny lower relative to other domains; good-faith assessment and participation decision sufficient for most entities |

Turning Your Scores into an Action Plan

Once you've scored each domain and applied the weighting, you have two inputs for your action plan: the gap size (how far below 3 each domain scores) and the regulatory weight (how urgently that gap needs to close). Plotting these against each other gives you a prioritisation grid.

| Reg. weight ↓ / Gap size → | Score 1 (Large gap) | Score 2 (Moderate gap) | Score 3+ (At baseline) |
|---|---|---|---|
| Critical weight | 🔴 Immediate: stop everything else | 🟠 Urgent: next 30 days | 🟡 Monitor: maintain and improve |
| High weight | 🟠 Urgent: next 30 days | 🟡 Planned: next 60-90 days | 🟢 Monitor: continuous improvement |
| Medium / Lower weight | 🟡 Planned: next 90 days | 🟢 Roadmap: next 6 months | 🟢 Done: sustain |

Any domain scoring 1 with Critical or High regulatory weight should produce an immediate escalation to the management body with a time-bound remediation plan. These are not backlog items - they represent the gaps most likely to produce supervisory consequences before the next annual submission cycle.
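The weighting table, the prioritisation grid, and the escalation rule translate directly into a lookup. This Python sketch hard-codes the weights and action bands from the two tables above; the band labels, the urgency ordering used for sorting, and the example scores are illustrative assumptions, not outputs from a real assessment.

```python
# Regulatory weight per domain, from the weighting table.
WEIGHTS = {
    "D1": "Medium", "D2": "Critical", "D3": "Medium", "D4": "High",
    "D5": "Critical", "D6": "Lower", "D7": "High",
}

# Action bands in assumed urgency order; each grid cell maps to one of these.
BANDS = ["Immediate", "Urgent (30 days)", "Planned (60-90 days)",
         "Planned (90 days)", "Roadmap (6 months)", "Monitor", "Sustain"]

# (weight row, score column) -> band; column 3 stands for "3 or above".
GRID = {
    ("Critical", 1): "Immediate",
    ("Critical", 2): "Urgent (30 days)",
    ("Critical", 3): "Monitor",
    ("High", 1): "Urgent (30 days)",
    ("High", 2): "Planned (60-90 days)",
    ("High", 3): "Monitor",
    ("Medium/Lower", 1): "Planned (90 days)",
    ("Medium/Lower", 2): "Roadmap (6 months)",
    ("Medium/Lower", 3): "Sustain",
}


def action_band(domain: str, score: int) -> str:
    """Look up the grid cell for a domain's weight and maturity score."""
    if score not in (1, 2, 3, 4):
        raise ValueError(f"score must be 1-4, got {score}")
    weight = WEIGHTS[domain]
    row = weight if weight in ("Critical", "High") else "Medium/Lower"
    return GRID[(row, min(score, 3))]


def needs_board_escalation(domain: str, score: int) -> bool:
    """Score 1 in a Critical- or High-weight domain goes to the management body."""
    return score == 1 and WEIGHTS[domain] in ("Critical", "High")


# Illustrative scores only.
scores = {"D1": 3, "D2": 2, "D3": 2, "D4": 1, "D5": 1, "D6": 1, "D7": 3}
plan = sorted(scores, key=lambda d: BANDS.index(action_band(d, scores[d])))
escalate = [d for d in plan if needs_board_escalation(d, scores[d])]
print(plan)      # ['D5', 'D2', 'D4', 'D6', 'D3', 'D7', 'D1']
print(escalate)  # ['D5', 'D4']
```

Ties within a band (D2 and D4 here) keep their domain-code order because Python's sort is stable; how you break ties in practice is a judgement call for your management team.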

Common Gap Profiles by Entity Type

Gap patterns vary significantly by entity type. Knowing which profile most resembles your organisation helps you calibrate where to focus your assessment effort and where you're most likely to find the critical gaps.

🏦 Credit institutions and large investment firms

These entities typically have the most mature ICT risk management frameworks - often built on EBA outsourcing guidelines, ISO 27001, or NIST - so D1 and D7 scores tend to be higher. The most common gaps are in D5 (Register of Information) at the operational output level (xBRL-CSV submission and sub-outsourcing completeness), and in D4 around the Article 30(2) contract clause review for legacy contracts signed before DORA. Incident classification under DORA's RTS thresholds (D2) is frequently underdeveloped relative to the maturity of the broader ITSM programme.

💳 Payment institutions and e-money institutions

These entities often have strong operational awareness of ICT dependencies - they're in the business of real-time payment processing and understand downtime risk intimately. But their formal governance documentation (D7) frequently lags their operational reality, and their ICT risk management framework (D1) may not have been updated to reflect DORA's specific requirements. The Register of Information (D5) is often the most significant project-scale gap.

🛡️ Insurance undertakings

Insurance entities frequently have strong risk governance frameworks from Solvency II, which helps D1 and D7. The biggest gaps tend to be in domains specific to DORA's ICT focus: digital resilience testing (D3) is often limited to basic vulnerability assessments without the DORA-required scope definition; ICT third-party risk (D4) is managed through existing vendor governance but hasn't been assessed for DORA-specific requirements; and the Register of Information (D5) is frequently in a very early stage for smaller insurance entities.

🚀 Fintechs and newer financial entities

Fintech entities often have high technical sophistication - cloud-native architectures, modern security tooling, DevSecOps practices - but formal governance documentation is frequently absent or thin. D7 (governance) and D1 (documented framework) are the most common low scores. The ICT dependency picture is often complex (high cloud concentration, many API-based integrations) which makes D4 and D5 scope definition challenging. The good news: their sub-outsourcing data is often more readily available because cloud providers are generally more transparent about their infrastructure chains than legacy IT providers.
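If you assess several entities in a group, the profiles above can be captured as a simple lookup that decides where to start evidence gathering. This Python sketch assumes hypothetical entity-type keys and lists each profile's likely weak domains in the order this article mentions them; every domain is still scored, only the sequence changes.

```python
ALL_DOMAINS = ["D1", "D2", "D3", "D4", "D5", "D6", "D7"]

# Domains most often found weak per profile, in the order the profiles mention them.
# The entity-type keys are hypothetical labels.
COMMON_GAPS = {
    "credit_institution": ["D5", "D4", "D2"],
    "payment_or_emoney": ["D7", "D1", "D5"],
    "insurance": ["D3", "D4", "D5"],
    "fintech": ["D7", "D1", "D4", "D5"],
}


def assessment_order(entity_type: str) -> list[str]:
    """Likely-gap domains first, then the rest in code order; nothing is skipped."""
    focus = COMMON_GAPS.get(entity_type, [])
    return focus + [d for d in ALL_DOMAINS if d not in focus]


print(assessment_order("insurance"))  # ['D3', 'D4', 'D5', 'D1', 'D2', 'D6', 'D7']
```

An unrecognised entity type falls back to plain domain-code order rather than raising, on the assumption that a full assessment is always the safe default.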

When to Reassess

A gap assessment is a point-in-time view. DORA is a continuous obligation. Run a full reassessment in the following circumstances:

- Annually, at minimum - ideally timed 4-5 months before your NCA submission deadline, giving enough time to act on what you find before the register needs to be finalised.

- Following a significant ICT incident - a major incident is both a test of your D2 maturity and a signal that your D1 framework may have gaps that weren't apparent on paper.

- After a material change in your ICT provider landscape - a new critical provider, an acquisition, or a significant contract renegotiation changes your D4 and D5 picture enough to warrant reassessment of those domains.

- When DORA technical standards are updated - the ITS and RTS have already been revised since initial publication. Each update potentially changes what "score 3" looks like in one or more domains.

- Before NCA supervisory visits or inspections - running a fresh assessment before a scheduled supervisory engagement gives you an accurate current picture and avoids the risk of presenting an outdated view of your readiness.
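The triggers above can be tracked as a small mapping from event to domains in scope. This Python sketch assumes, as an interpretation of the list, that annual and pre-visit reassessments cover all seven domains while incident and provider-change triggers scope to the domains each bullet names; the event names are hypothetical. A technical-standards update has no fixed scope, so the caller supplies the domains affected by the revised ITS or RTS.

```python
ALL_DOMAINS = frozenset({"D1", "D2", "D3", "D4", "D5", "D6", "D7"})

# Reassessment triggers (hypothetical names) and the domains each puts back in scope.
TRIGGERS = {
    "annual": ALL_DOMAINS,
    "significant_incident": frozenset({"D1", "D2"}),
    "provider_change": frozenset({"D4", "D5"}),
    "pre_supervisory_visit": ALL_DOMAINS,
}


def domains_to_reassess(events: list[str],
                        standards_affected: frozenset[str] = frozenset()) -> set[str]:
    """Union of domains triggered by the given events.

    "standards_update" has no fixed scope: pass the domains the revised ITS/RTS touch.
    """
    scope: set[str] = set()
    for event in events:
        if event == "standards_update":
            scope |= set(standards_affected)
        elif event in TRIGGERS:
            scope |= TRIGGERS[event]
        else:
            raise ValueError(f"unknown trigger: {event}")
    return scope


print(sorted(domains_to_reassess(["significant_incident", "provider_change"])))
# ['D1', 'D2', 'D4', 'D5']
```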

Know your DORA score before your NCA does.

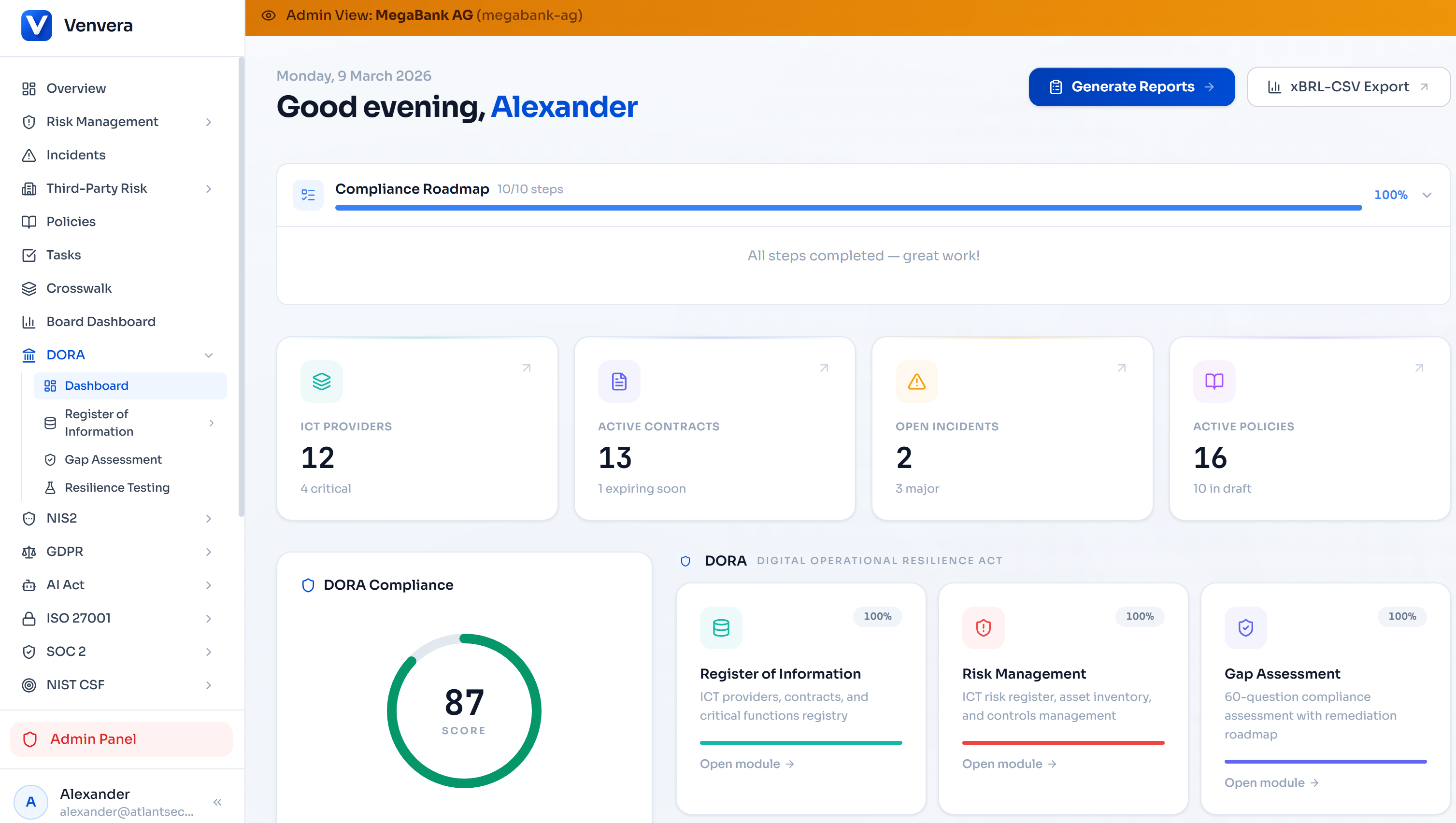

Venvera's built-in gap assessment module maps your readiness across all seven domains, tracks remediation progress, and connects directly to your Register of Information - so your gap data and your compliance data stay in sync year-round.

Frequently Asked Questions

How long does a DORA gap assessment typically take?

For a standalone financial entity, a thorough gap assessment covering all seven domains takes between 4 and 8 weeks when done properly - including stakeholder interviews across ICT, compliance, legal, procurement, and the management body. For larger groups or entities with complex ICT landscapes, 10-12 weeks is more realistic. Assessments that run faster than 4 weeks usually mean evidence gathering has been superficial.

Should we use an external consultant for the DORA gap assessment?

External consultants bring objectivity and pattern recognition from working across multiple institutions. They're particularly valuable for D5 (Register of Information) where the technical xBRL requirements are specialised, and for D3 (testing) where TLPT applicability and tester selection involve regulatory nuance. However, an external assessment is only as good as the internal stakeholder access and evidence sharing that supports it. A hybrid approach - internal self-assessment validated by an external review - often produces the most actionable output.

Does a DORA gap assessment need to be shared with the NCA?

There is no mandatory requirement to submit a gap assessment to your NCA. However, NCAs may request evidence of your DORA compliance programme during supervisory visits, and a well-documented gap assessment with a remediation plan demonstrates proactive engagement with your obligations - which is generally viewed positively. Be cautious about gap assessments that document material failures without an accompanying remediation plan, as these can become supervisory findings if disclosed.

Can we use our existing ISO 27001 or NIST assessment as a DORA gap assessment?

Partially. Your ISO 27001 or NIST assessment gives you a useful signal on D1 (ICT risk management framework) and D3 (testing), but it does not cover D5 (Register of Information), D4 (DORA-specific third-party requirements including Article 30(2)), D2 (DORA-specific incident classification and timelines), or D7 (management body accountability under Article 5). You would need to supplement any existing framework assessment with DORA-specific evaluation in these domains before you have a complete picture.

What score should we be targeting across all domains?

A score of 3 in every domain represents the regulatory baseline - you meet DORA's requirements as written. For Critical-weight domains (D5 and D2), targeting 4 is strongly advisable because the consequences of failure in these areas are immediate and visible to regulators. For Medium and Lower weight domains, reaching 3 and maintaining it is a reasonable target for most entities. The goal is not to achieve perfect scores everywhere - it is to ensure no high-weight domain is left below 2, and that any domain scoring 1 has an immediate remediation plan with board-level visibility.
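The targeting guidance above can be turned into a quick check on any set of scores. This Python sketch assumes the weights from the weighting table; Critical-weight domains target 4 as the answer recommends, while the target of 3 for High-weight domains is an assumption you may want to raise to 4. The example scores are illustrative only.

```python
WEIGHTS = {"D1": "Medium", "D2": "Critical", "D3": "Medium", "D4": "High",
           "D5": "Critical", "D6": "Lower", "D7": "High"}

# Target per weight: Critical -> 4 per the guidance; High -> 3 is an assumption.
TARGETS = {"Critical": 4, "High": 3, "Medium": 3, "Lower": 3}


def shortfalls(scores: dict[str, int]) -> list[tuple[str, int, int]]:
    """(domain, score, target) for every domain below its target, in code order."""
    result = []
    for domain, score in sorted(scores.items()):
        target = TARGETS[WEIGHTS[domain]]
        if score < target:
            result.append((domain, score, target))
    return result


def needs_immediate_plan(scores: dict[str, int]) -> list[tuple[str, str]]:
    """(domain, weight) for every domain scoring 1: each needs a board-visible plan."""
    return [(d, WEIGHTS[d]) for d, s in sorted(scores.items()) if s == 1]


scores = {"D1": 3, "D2": 3, "D3": 3, "D4": 2, "D5": 1, "D6": 2, "D7": 3}
print(shortfalls(scores))
# [('D2', 3, 4), ('D4', 2, 3), ('D5', 1, 4), ('D6', 2, 3)]
print(needs_immediate_plan(scores))  # [('D5', 'Critical')]
```

Keeping the weight next to each score-1 domain makes it easy to see at a glance whether the "no high-weight domain below 2" floor has been breached.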

Written by the Venvera compliance team. Venvera is a purpose-built DORA compliance platform for European financial entities. Last updated: February 2026.