January 17, 2025 was the date that was supposed to mark the end of the preparation period. DORA - the Digital Operational Resilience Act (Regulation (EU) 2022/2554) - became fully enforceable, and financial entities across the European Union were expected to meet its requirements in full. The message from regulators was clear: no transition period, no grace period, no excuses.

Over fourteen months later, the picture is sobering. The European Banking Authority (EBA), the European Central Bank (ECB), and national competent authorities (NCAs) have begun their first rounds of supervisory assessments. What they are finding confirms what many compliance professionals feared: most financial entities achieved surface-level compliance at best. Policies were adopted, governance structures were named, but the operational substance - the tooling, the data, the tested processes - was not ready.

Based on supervisory observations, industry surveys, and our direct work with dozens of EU financial entities, we have identified five structural compliance gaps that appear repeatedly. These are not edge cases. They affect the majority of institutions - from Tier 1 banks to mid-market investment firms. This article examines each gap in detail, explains why it persists, and offers a concrete path to remediation.

⚠️ Supervisory Context

The ECB’s 2025-2026 Supervisory Priorities explicitly name ICT risk and digital operational resilience as a key focus area. The EBA’s peer review on DORA implementation is scheduled for Q3 2026. NCAs across the EU-27 have already issued preliminary findings from on-site inspections. Institutions that have not addressed these gaps face regulatory action - not in theory, but on a defined supervisory timeline.

Gap 1 - Critical

Incomplete Register of Information

The Regulatory Requirement

DORA Article 28(3) requires financial entities to maintain a complete Register of Information (RoI) covering all contractual arrangements for the use of ICT services provided by third-party service providers. The Implementing Technical Standards (ITS) on the register, submitted by the ESAs as a final draft in January 2024 and later adopted by the European Commission, specify the exact data model: 15 interconnected templates with over 100 mandatory fields, covering the entity itself, ICT providers, contractual arrangements, sub-outsourcing chains, business functions supported, and risk assessments.

What supervisors are finding: Most institutions have built a register - but the data is incomplete, inconsistent, or structurally wrong. The most common deficiencies include:

- Missing sub-outsourcing chains. Under Article 28(3), as specified in the ITS, entities must identify not just their direct ICT providers but also the sub-outsourcing chain behind ICT services that support critical or important functions. Most banks have documented their direct (first-tier) providers only. When a cloud provider sub-contracts data hosting or security monitoring, that chain is invisible in the register.

- Incorrect or missing entity identifiers. The ESA ITS mandates LEI codes (where available), entity types using ESA classification codes, and NCA identifiers for regulated entities. Many registers contain free-text company names without structured identifiers, rendering the data unsuitable for regulatory reporting.

- No xBRL-CSV export capability. The ESAs have mandated that the RoI be reportable in xBRL-CSV format - a structured, machine-readable format used by supervisory filing systems. Institutions that built their register in Excel or SharePoint cannot produce a valid regulatory filing.

- Business function mapping gaps. Each contractual arrangement must be linked to the business functions and critical or important functions it supports. Institutions frequently mapped ICT providers to departments rather than to specific business functions as defined by their own ICT risk management framework.
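Structural LEI checks are cheap to automate before a filing. The sketch below validates the ISO 17442 format, which is 18 alphanumeric characters followed by two check digits. It then verifies those digits with the ISO 7064 MOD 97-10 scheme. The function names are hypothetical. The check cannot tell you whether an LEI is registered, has lapsed, or belongs to the right entity. That requires a lookup against GLEIF reference data.

```python
import re

# ISO 17442 layout: 18 alphanumeric characters followed by 2 numeric check digits.
LEI_PATTERN = re.compile(r"[A-Z0-9]{18}[0-9]{2}")


def _to_number(chars: str) -> int:
    """Map 0-9 to themselves and A-Z to 10-35, then read the result as one integer."""
    return int("".join(str(int(ch, 36)) for ch in chars))


def is_valid_lei(lei: str) -> bool:
    """Check the LEI format and its ISO 7064 MOD 97-10 check digits."""
    lei = lei.strip().upper()
    if not LEI_PATTERN.fullmatch(lei):
        return False
    # A correct LEI leaves a remainder of 1 when its numeric form is divided by 97.
    return _to_number(lei) % 97 == 1


def lei_check_digits(prefix18: str) -> str:
    """Compute the two check digits for an 18-character LEI prefix."""
    remainder = _to_number(prefix18.upper() + "00") % 97
    return f"{98 - remainder:02d}"


# Usage with a made-up prefix (not a real entity):
prefix = "5299000ABCDEFGH123"
candidate = prefix + lei_check_digits(prefix)
print(is_valid_lei(candidate))        # True
print(is_valid_lei("ACME BANK LTD"))  # False: free text is rejected
```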

“The Register of Information is not a documentation exercise. It is the data infrastructure on which supervisory oversight of ICT third-party risk depends. Incomplete registers undermine the entire supervisory framework.”

- EBA Discussion Paper on DORA Implementation, 2025

Why it persists: The register is deceptively complex. Institutions treated it as a procurement exercise (list your vendors) rather than a data architecture exercise (model your entire ICT supply chain with regulatory-grade data). The ESA ITS data model requires relational integrity across 15 tables - something spreadsheets cannot enforce.
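A database can enforce relational integrity where a spreadsheet cannot. It will refuse a contract record that points to a provider the register does not contain, while a spreadsheet silently accepts it. The sketch below uses Python's built-in `sqlite3` module with a hypothetical two-table slice of a register. The table and column names are illustrative only and do not correspond to the ESA ITS template codes.

```python
import sqlite3

# Hypothetical two-table slice of a register; names are illustrative,
# not the ESA ITS template or field codes.
SCHEMA = """
CREATE TABLE provider (
    provider_lei TEXT PRIMARY KEY,
    name         TEXT NOT NULL
);
CREATE TABLE contract (
    contract_ref TEXT PRIMARY KEY,
    provider_lei TEXT NOT NULL REFERENCES provider(provider_lei),
    supports_critical_function INTEGER NOT NULL
        CHECK (supports_critical_function IN (0, 1))
);
"""


def open_register() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    # SQLite only enforces foreign keys when this pragma is enabled on the connection.
    conn.execute("PRAGMA foreign_keys = ON")
    conn.executescript(SCHEMA)
    return conn


def add_provider(conn: sqlite3.Connection, lei: str, name: str) -> None:
    with conn:  # commits on success, rolls back on error
        conn.execute("INSERT INTO provider VALUES (?, ?)", (lei, name))


def add_contract(conn: sqlite3.Connection, ref: str, provider_lei: str, critical: bool) -> bool:
    """Insert a contract; return False if the database rejects it (e.g. unknown provider)."""
    try:
        with conn:
            conn.execute(
                "INSERT INTO contract VALUES (?, ?, ?)", (ref, provider_lei, int(critical))
            )
        return True
    except sqlite3.IntegrityError:
        return False


conn = open_register()
add_provider(conn, "PROVIDER-A", "Example Cloud Ltd")
print(add_contract(conn, "C-001", "PROVIDER-A", True))   # True
print(add_contract(conn, "C-002", "PROVIDER-X", False))  # False: no such provider
```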

Gap 2 - High Risk

ICT Third-Party Concentration Risk Not Quantified

The Regulatory Requirement

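DORA Articles 28-30 require financial entities to assess concentration risk arising from ICT third-party dependencies. Article 29(1) applies when an entity plans a contractual arrangement for ICT services supporting critical or important functions. The entity must consider whether the arrangement would mean relying on a provider that is not easily substitutable. It must also consider whether it would mean holding multiple such arrangements with the same provider or with closely connected providers. Article 29(2) adds that, where sub-contracting is permitted, entities must weigh the benefits and risks arising from it. Substitutability (whether alternative providers exist and whether migration is feasible) is therefore central to the assessment.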

What supervisors are finding: Concentration risk analysis, where it exists at all, tends to be qualitative and superficial. Institutions acknowledge that they depend heavily on a small number of cloud providers (typically AWS, Microsoft Azure, and Google Cloud), but they have not performed the quantitative analysis DORA requires:

Single Points of Failure

Most banks cannot identify which critical business functions would be simultaneously affected if a single ICT provider experienced a major outage. The mapping between providers and business functions is incomplete.

Substitutability Assessment

Art. 29 requires analysis of whether alternative ICT providers exist. Few institutions have documented substitution feasibility, migration costs, or lock-in factors for their critical ICT service providers.

Cross-Entity Dependencies

Group-level concentration risk - where multiple entities within a financial group depend on the same provider - is almost never aggregated. Supervisors expect a consolidated group view.

Exit Strategy Gaps

DORA requires documented exit plans for critical ICT providers (Art. 28(8)). Most institutions have generic exit clauses in contracts but no operational exit strategy with timelines, costs, and testing results.
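The dependency mapping behind these questions is a graph problem. The sketch below assumes a hypothetical mapping of critical functions to their direct providers, and of providers to their subcontractors. It walks each function's sub-outsourcing chain and counts how many critical functions end up depending on each provider. It deliberately ignores redundancy, so a function served by two independent providers is counted as exposed to both. Treat the output as a shortlist for single-point-of-failure and substitutability review, not a verdict.

```python
from collections import defaultdict


def reachable_providers(direct, subcontracts):
    """All providers reachable from `direct` through sub-outsourcing edges (cycle-safe)."""
    seen, stack = set(), list(direct)
    while stack:
        provider = stack.pop()
        if provider in seen:
            continue
        seen.add(provider)
        stack.extend(subcontracts.get(provider, ()))
    return seen


def provider_exposure(function_providers, subcontracts):
    """Map each provider to the functions that depend on it, directly or via the chain."""
    exposure = defaultdict(set)
    for function, direct in function_providers.items():
        for provider in reachable_providers(direct, subcontracts):
            exposure[provider].add(function)
    return dict(exposure)


def concentration_hotspots(function_providers, subcontracts, min_functions=2):
    """Providers on which at least `min_functions` critical functions depend."""
    exposure = provider_exposure(function_providers, subcontracts)
    return {p: fs for p, fs in exposure.items() if len(fs) >= min_functions}


# Hypothetical data: critical function -> direct providers; provider -> its subcontractors.
functions = {
    "payments": ["CoreBankingSaaS"],
    "trading": ["CloudX"],
    "card_issuing": ["CardProcessor"],
}
subcontracts = {
    "CoreBankingSaaS": ["CloudX"],
    "CardProcessor": ["CloudX", "HSMVendor"],
}
print(concentration_hotspots(functions, subcontracts))
```

For the consolidated group view, key the functions by (entity, function) pairs such as `("Bank AG", "payments")`. The same traversal then aggregates exposure across every entity in the group.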

Why it persists: Concentration risk analysis requires data that spans procurement, IT architecture, business continuity, and risk management. In most institutions, this data lives in four different systems owned by four different departments. Without a unified ICT risk platform, the analysis is structurally impossible.

Gap 3 - Critical

Incident Classification Criteria Not Operationalized

The Regulatory Requirement

DORA Articles 17-20 establish a comprehensive ICT-related incident management framework. Article 18 sets out the criteria that financial entities must apply to determine whether an incident is “major” and therefore subject to mandatory NCA reporting. The RTS on incident classification published by the ESAs specifies these criteria in detail. The classification is a multi-criteria test across seven dimensions:

- clients, financial counterparts and transactions affected
- reputational impact
- duration and service downtime
- geographical spread
- data losses
- criticality of the services affected
- economic impact
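The decision rule itself is small enough to encode. On our reading of the RTS, an incident is major if it affected critical services and one of two further conditions holds. Either it involved malicious unauthorised access that may result in data losses, or at least two other criteria met their materiality thresholds. The sketch encodes only that combination rule, and the class and field names are hypothetical. Whether each threshold is met is passed in as an already-evaluated flag, because the threshold values must be taken from the RTS itself. Verify the rule against the RTS text before relying on it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CriteriaFlags:
    """True where the corresponding materiality threshold has been met.
    Evaluating each threshold against the RTS values happens upstream."""
    critical_services_affected: bool
    malicious_access_possible_data_loss: bool
    clients_counterparts_transactions: bool = False
    reputational_impact: bool = False
    duration_and_downtime: bool = False
    geographical_spread: bool = False
    data_losses: bool = False
    economic_impact: bool = False


# Criteria that count towards the "two or more" condition.
OTHER_CRITERIA = (
    "clients_counterparts_transactions",
    "reputational_impact",
    "duration_and_downtime",
    "geographical_spread",
    "data_losses",
    "economic_impact",
)


def is_major_incident(flags: CriteriaFlags) -> bool:
    # Impact on critical services is a precondition for "major".
    if not flags.critical_services_affected:
        return False
    # Malicious unauthorised access that may result in data losses suffices on its own.
    if flags.malicious_access_possible_data_loss:
        return True
    # Otherwise at least two other criteria must meet their thresholds.
    met = sum(getattr(flags, name) for name in OTHER_CRITERIA)
    return met >= 2


print(is_major_incident(CriteriaFlags(True, False, duration_and_downtime=True,
                                      economic_impact=True)))  # True
print(is_major_incident(CriteriaFlags(True, False, economic_impact=True)))  # False
```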

What supervisors are finding: Institutions have incident management policies that reference DORA. They have updated their incident response procedures to mention NCA reporting. But the actual classification - the operational mechanism that determines, in real-time, whether an incident crosses the “major” threshold - is not embedded in workflows:

- Threshold definitions are vague. The RTS requires quantitative thresholds (e.g., percentage of clients affected, duration in hours, volume of failed transactions). Most institutions have defined thresholds qualitatively (“significant number of clients”) or have not calibrated thresholds at all.

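- Reporting timelines are not automated. DORA's reporting standards set three deadlines:
  - an initial notification within 4 hours of classifying an incident as major, and no later than 24 hours after becoming aware of it;
  - an intermediate report within 72 hours of the initial notification;
  - a final report within one month of the latest intermediate report.

  These deadlines must trigger automatically once an incident is classified as major. In most banks, the clock management is manual - or non-existent.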

- The classification decision is ad hoc. Rather than applying the seven-criteria test systematically, SOC analysts make subjective judgments about severity. This leads to under-reporting (avoiding regulatory scrutiny) or delayed reporting (because no one is sure whether the thresholds are met).

- Recurring incident aggregation is missing. The RTS on incident classification covers recurring incidents that individually do not meet the major threshold. Where they share the same apparent root cause and collectively meet the classification criteria, they must be treated as a single major incident. Almost no institution has implemented aggregation logic.
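The deadline arithmetic is simple enough to automate. The sketch below is based on our reading of the reporting timelines, and its function names are hypothetical:

- The initial notification is due 4 hours after classification, but no later than 24 hours after the entity became aware of the incident.
- The intermediate report is due 72 hours after the initial notification.
- The final report is due one month after the latest intermediate report.

Reading "one month" as the same day of the next month, clamped to the month end, is an assumed convention. Time zones, weekend rules and any other exceptions are not modelled.

```python
import calendar
from datetime import datetime, timedelta


def initial_notification_due(aware_at: datetime, classified_at: datetime) -> datetime:
    """4 hours after classification as major, but no later than 24 hours after awareness."""
    return min(classified_at + timedelta(hours=4), aware_at + timedelta(hours=24))


def intermediate_report_due(initial_sent_at: datetime) -> datetime:
    """72 hours after the initial notification was submitted."""
    return initial_sent_at + timedelta(hours=72)


def add_one_month(ts: datetime) -> datetime:
    """Same day next month, clamped to the month end (assumed convention)."""
    year, month = (ts.year + 1, 1) if ts.month == 12 else (ts.year, ts.month + 1)
    day = min(ts.day, calendar.monthrange(year, month)[1])
    return ts.replace(year=year, month=month, day=day)


def final_report_due(last_intermediate_sent_at: datetime) -> datetime:
    """One month after the latest updated intermediate report."""
    return add_one_month(last_intermediate_sent_at)


aware = datetime(2025, 3, 3, 8, 0)
classified = datetime(2025, 3, 3, 10, 0)
print(initial_notification_due(aware, classified))  # 2025-03-03 14:00:00
```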

“Having an incident response policy is not the same as having an operational incident classification capability. Supervisors will test the latter, not the former.”

- ECB Supervisory Newsletter, Observations on ICT Risk, 2025

Why it persists: Incident classification under DORA is fundamentally different from traditional ITIL-based severity models. The DORA classification is a regulatory act with legal consequences (mandatory reporting, supervisory follow-up, potential sanctions). Most incident management tools were built for IT operations, not regulatory compliance.

Gap 4 - Significant

Resilience Testing Programme Gaps

The Regulatory Requirement

DORA Articles 24-27 establish a mandatory digital operational resilience testing programme. Article 24 requires all financial entities other than microenterprises to maintain a comprehensive testing programme, proportionate to their size, as part of their ICT risk management framework. For entities identified by NCAs as significant, Article 26 mandates Threat-Led Penetration Testing (TLPT) at least every three years, conducted in accordance with the TIBER-EU framework or equivalent national schemes.

What supervisors are finding: Annual testing programmes exist on paper - most institutions already had vulnerability scanning and penetration testing in place. But the DORA testing programme requirements go substantially beyond what was already done:

| Testing Requirement | Common Gap | Compliant Standard |
|---|---|---|
| Board approval of testing programme (Art. 24(2)) | Testing planned by IT/security team without board sign-off | Board formally approves scope, frequency, and methodology annually |
| TLPT scoping and execution (Art. 26) | No TLPT programme, or TLPT scope does not cover all critical functions | TLPT every 3 years per TIBER-EU, scope covers all identified critical functions |
| Scenario-based testing (Art. 25(1)) | Vulnerability scans only; no scenario-based or stress testing | Scenario tests covering ICT provider failure, cyber attack, data loss scenarios |
| Findings remediation tracking (Art. 24(5)) | Findings tracked in spreadsheets; no formal remediation timeline or escalation | All findings tracked with remediation owner, timeline, re-testing, and board reporting |
| Tester independence (Art. 24(4); Art. 27 for TLPT testers) | Same firm conducts audit and TLPT; independence not verified | Independent external testers with documented qualifications and no conflicts of interest |
| Coverage of ICT providers (Art. 26(2)-(3)) | Testing programme covers internal systems only; third-party services excluded | Testing encompasses ICT services provided by third parties supporting critical functions |
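The remediation-tracking requirement in the table above is largely a matter of data discipline. The sketch below uses hypothetical names. A finding only counts as closed once it has been both remediated and re-tested. Overdue findings are then grouped by owner for escalation.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Finding:
    finding_id: str
    owner: str
    due: date
    remediated_on: Optional[date] = None
    retest_passed: bool = False

    def is_closed(self) -> bool:
        # Closed only once remediated AND confirmed by re-testing.
        return self.remediated_on is not None and self.retest_passed


def overdue(findings: list[Finding], today: date) -> list[Finding]:
    return [f for f in findings if not f.is_closed() and today > f.due]


def escalation_report(findings: list[Finding], today: date) -> dict[str, list[str]]:
    """Overdue finding IDs grouped by owner, e.g. as input to board reporting."""
    report: dict[str, list[str]] = {}
    for f in overdue(findings, today):
        report.setdefault(f.owner, []).append(f.finding_id)
    return report


findings = [
    Finding("TLPT-01", "CISO", date(2026, 1, 31)),
    # Fixed but never re-tested, so it still counts as open.
    Finding("PEN-07", "Head of IT Ops", date(2026, 2, 15), remediated_on=date(2026, 2, 10)),
    Finding("SCN-03", "CISO", date(2026, 6, 30)),
]
print(escalation_report(findings, date(2026, 3, 1)))
# {'CISO': ['TLPT-01'], 'Head of IT Ops': ['PEN-07']}
```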

Why it persists: The disconnect is between security testing (a technical exercise managed by CISOs) and resilience testing under DORA (a governance exercise overseen by the management body). DORA requires that the testing programme be integrated into the ICT risk management framework, approved by the board, and its results feed back into risk assessments. Most institutions have never connected these processes.

Gap 5 - Pervasive

Contractual Arrangements Missing Art. 30 Mandatory Clauses

The Regulatory Requirement

DORA Article 30 specifies the key contractual provisions that must be included in agreements with ICT third-party service providers. Article 30(2) lists nine provisions that apply to every ICT contract. For providers supporting critical or important functions, Article 30(3) adds six enhanced requirements:

- precise performance targets
- reporting obligations
- business contingency and ICT security
- participation in TLPT
- unrestricted audit and access rights
- exit strategies

Sub-outsourcing conditions and data location commitments are already covered by Article 30(2)(a) and (b).

The mandatory provisions under Art. 30 (items 1-9 apply to all ICT contracts; items 10-15 are added for critical or important functions):

1. Clear and complete description of all ICT services, including whether and on what conditions sub-outsourcing is permitted (Art. 30(2)(a))

2. Locations where services are provided and data is processed and stored (Art. 30(2)(b))

3. Data protection: availability, authenticity, integrity and confidentiality (Art. 30(2)(c))

4. Data access, recovery and return (Art. 30(2)(d))

5. Service level descriptions (Art. 30(2)(e))

6. Provider assistance when an ICT incident occurs (Art. 30(2)(f))

7. Cooperation with competent and resolution authorities (Art. 30(2)(g))

8. Termination rights and minimum notice periods (Art. 30(2)(h))

9. Participation in ICT security awareness and resilience training (Art. 30(2)(i))

10. SLAs with precise quantitative and qualitative performance targets (Art. 30(3)(a))

11. Notice periods and reporting obligations towards the financial entity (Art. 30(3)(b))

12. Business contingency plans and ICT security measures (Art. 30(3)(c))

13. Participation in threat-led penetration testing (Art. 30(3)(d))

14. Unrestricted rights of access, inspection and audit (Art. 30(3)(e))

15. Exit strategies, including a mandatory transition period (Art. 30(3)(f))

What supervisors are finding: The gap is massive. Most existing ICT contracts pre-date DORA and were negotiated under earlier frameworks (the EBA Guidelines on outsourcing arrangements, local NCA requirements). Contract remediation programmes, meaning the systematic amendment of existing contracts to include Art. 30 clauses, are either not started or moving too slowly:

- Large hyperscaler contracts are the hardest. Institutions report that AWS, Microsoft, and Google have published “DORA addenda” that partially address Art. 30, but these standardized documents often do not fully meet the regulation’s requirements - particularly regarding unrestricted audit rights and sub-outsourcing approval.

- No clause-by-clause tracking. Most institutions do not have a systematic way to track which of the 14+ mandatory clauses are present in each contract. The assessment is done manually, contract by contract, by legal teams - with no structured data output.

- Renewal timelines misaligned. Many ICT contracts have multi-year terms. Institutions are waiting for contract renewals to introduce DORA clauses, leaving them non-compliant until renewal dates that may be 2-3 years away.
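Clause-by-clause tracking is essentially a small data model in which each contract carries a status per required clause. The sketch below uses hypothetical clause keys and function names. A real tracker would key clauses to the Art. 30 points and link each one to the relevant contract section. Clauses nobody has reviewed yet are counted as missing.

```python
from enum import Enum


class ClauseStatus(Enum):
    PRESENT = "present"
    PARTIAL = "partial"
    MISSING = "missing"


def clause_gaps(statuses: dict, required: list) -> dict:
    """Required clauses that are not fully present; unreviewed clauses count as missing."""
    gaps = {}
    for clause in required:
        status = statuses.get(clause, ClauseStatus.MISSING)
        if status is not ClauseStatus.PRESENT:
            gaps[clause] = status
    return gaps


def coverage(statuses: dict, required: list) -> float:
    """Share of required clauses marked fully present."""
    present = sum(1 for c in required if statuses.get(c) is ClauseStatus.PRESENT)
    return present / len(required)


def portfolio_summary(contracts: dict, required: list) -> dict:
    """contract_ref -> (coverage ratio, gaps), worst coverage first."""
    rows = {ref: (coverage(s, required), clause_gaps(s, required))
            for ref, s in contracts.items()}
    return dict(sorted(rows.items(), key=lambda item: item[1][0]))


# Hypothetical, abbreviated clause list and contract portfolio.
REQUIRED = ["service_description", "data_locations", "audit_rights", "exit_strategy"]
contracts = {
    "SOC-2023-11": {c: ClauseStatus.PRESENT for c in REQUIRED},
    "CLOUD-2019-04": {
        "service_description": ClauseStatus.PRESENT,
        "data_locations": ClauseStatus.PARTIAL,
        "audit_rights": ClauseStatus.MISSING,
    },
}
summary = portfolio_summary(contracts, REQUIRED)
for ref, (cov, gaps) in summary.items():
    print(f"{ref}: {cov:.0%} covered, gaps: {sorted(gaps)}")
```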

Why it persists: Contract remediation at scale is a massive legal and procurement exercise. A mid-size bank may have 200-500 ICT provider contracts. Reviewing each contract against 14+ mandatory clauses, negotiating amendments with providers who may resist (especially regarding audit rights and sub-outsourcing controls), and tracking the remediation programme requires purpose-built tooling - not a legal team and a spreadsheet.

Self-Assessment

DORA Readiness Assessment Checklist

Use this table to benchmark your institution against the five critical gap areas. Be honest - supervisors will be.

| Gap Area | ✓ Compliant Looks Like | ✗ Non-Compliant Looks Like |
|---|---|---|
| Register of Information | Complete RoI with all 15 ESA templates populated, sub-outsourcing chains mapped, LEI codes validated, xBRL-CSV export tested and filed | Excel-based vendor list with company names only, no sub-outsourcing visibility, no regulatory export capability |
| Concentration Risk | Quantified dependency analysis per critical provider, documented substitutability assessment, exit strategies tested, board-reported dashboard | Qualitative statement that “we depend on AWS” without quantified impact analysis, no exit plans, no substitutability assessment |
| Incident Classification | Art. 18 seven-criteria test embedded in SOC workflow, quantitative thresholds calibrated, automated reporting timelines (4h/72h/1mo), recurring incident aggregation | Updated incident policy referencing DORA but no operational classification mechanism; analysts make subjective severity calls |
| Resilience Testing | Board-approved testing programme, TLPT scoped per TIBER-EU, scenario-based tests executed, findings formally tracked with remediation timelines and re-testing | Annual pen test and vulnerability scans only, no board approval, no TLPT programme, findings in PDF reports without tracking |
| Art. 30 Contracts | All ICT contracts reviewed against 14+ mandatory clauses, remediation programme with timelines, clause-by-clause tracking per contract, negotiation status dashboard | Contracts pre-date DORA, no systematic clause review, waiting for renewal cycles, hyperscaler addenda accepted without gap analysis |

⚠️ Scoring Your Assessment

If your institution falls on the “non-compliant” side for 3 or more of these areas, you are at material risk of supervisory findings. The ECB’s on-site inspection methodology for ICT risk includes all five areas, and NCAs are conducting targeted reviews specifically focused on the Register of Information and incident classification readiness.
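The scoring rule above reduces to a count over the five checklist areas. The sketch below encodes it, and the function name is hypothetical. Unknown area names are rejected so that typos cannot silently lower the count.

```python
GAP_AREAS = (
    "Register of Information",
    "Concentration Risk",
    "Incident Classification",
    "Resilience Testing",
    "Art. 30 Contracts",
)


def at_material_risk(non_compliant: set[str], threshold: int = 3) -> bool:
    """True when `threshold` or more of the five gap areas are non-compliant."""
    unknown = non_compliant - set(GAP_AREAS)
    if unknown:
        raise ValueError(f"Unknown gap areas: {sorted(unknown)}")
    return len(non_compliant) >= threshold


print(at_material_risk({"Register of Information", "Incident Classification"}))  # False
```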

Solution

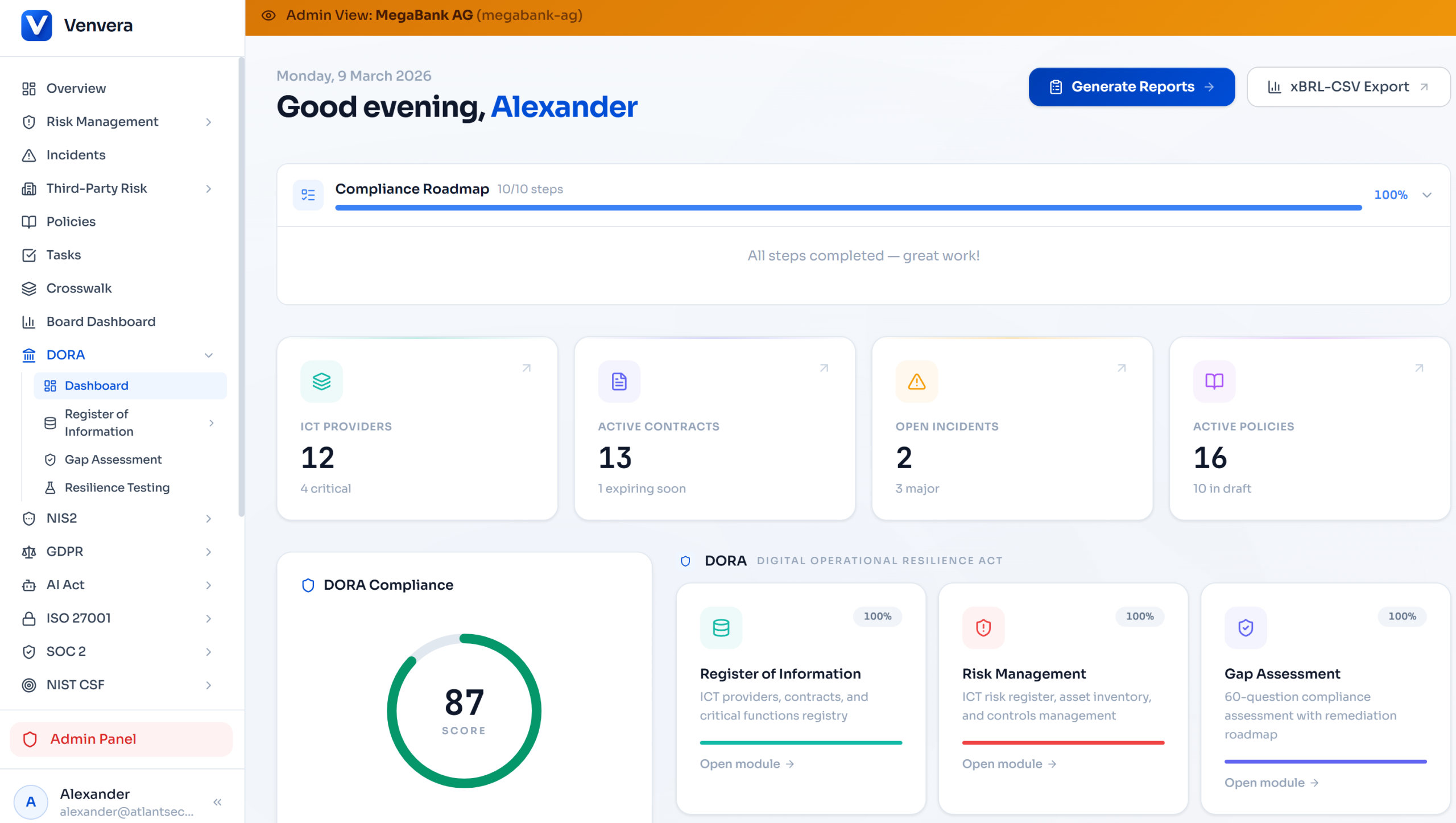

How Venvera’s Gap Assessment Tool Closes These Gaps

These five gaps are not random. They share a common root cause: institutions are managing DORA compliance in disconnected spreadsheets, documents, and generic GRC tools that were not built for DORA’s specific requirements. Venvera was purpose-built to address exactly this problem.

Automated Gap Scoring

Venvera’s gap assessment engine evaluates your institution across all five DORA pillars: ICT risk management (Art. 5-16), incident management (Art. 17-20), resilience testing (Art. 24-27), third-party risk (Art. 28-30), and information sharing (Art. 45). Each pillar receives a compliance score based on data completeness, process maturity, and evidence quality.

Board-Ready Reporting

Generate executive summaries that translate technical compliance gaps into business risk language your management body understands. Pillar-level dashboards, trend lines showing progress over time, and comparison against regulatory benchmarks - all exportable to PDF for board packs.

Register of Information Module

A fully structured RoI that enforces the ESA ITS data model. All 15 templates built in, LEI validation, ESA entity code lookups, sub-outsourcing chain mapping, and one-click xBRL-CSV export. No more spreadsheet gymnastics - the data model enforces completeness.

Remediation Tracking

Every identified gap becomes a trackable remediation item with an owner, timeline, priority level, and linked evidence. Progress is measured automatically as data is entered and processes are documented. No gap gets lost in an email thread.

Contract Clause Tracker

Track Art. 30 clause coverage per contract. Each of the 14+ mandatory clauses can be marked as present, missing, or partially addressed, with links to the relevant contract section. Dashboard view shows overall remediation progress across your entire contract portfolio.

Incident Classification Engine

DORA-specific incident classification with the Art. 18 seven-criteria test built into the workflow. Quantitative thresholds are configurable, reporting deadlines are automatically calculated, and NCA notification templates are pre-formatted. Recurring incident aggregation is automated.

Why Purpose-Built Matters

Generic GRC platforms treat DORA as a checklist of controls. Venvera treats DORA as a data architecture problem - because that’s what it is. The Register of Information is a relational database. Concentration risk requires graph analysis of provider dependencies. Incident classification requires a rules engine. Contract compliance requires clause-level tracking. These are engineering problems, not checklist problems.

Timeline

The Supervisory Clock Is Ticking

Understanding the supervisory calendar is critical for prioritizing your remediation efforts. Here are the key milestones that should drive your gap closure timeline:

| Date | Supervisory Milestone | What It Means for You |
|---|---|---|
| Jan 17, 2025 | DORA enforcement date | Full compliance required from this date. No transition period. |
| Apr 30, 2025 | First RoI filing deadline (most NCAs) | Register of Information submitted to NCA. Data quality issues flagged. |
| H2 2025-H1 2026 | First wave of on-site inspections (ECB, NCAs) | Targeted reviews of ICT risk management frameworks, incident response capabilities, and testing programmes. |
| Q3 2026 | EBA Peer Review on DORA implementation | Cross-jurisdictional assessment. NCAs under pressure to demonstrate enforcement. Expect increased scrutiny. |
| 2025-2028 | CTPP designation and oversight framework | Lead Overseers designated for critical ICT providers. Direct implications for your concentration risk analysis and exit planning. |

“DORA is not a regulation that financial entities can comply with on paper and hope supervisors won’t notice the gaps in practice. The supervisory methodology is designed to test operational substance, not documentary form. Entities that have policies but not capabilities will be found non-compliant.”

- Analysis based on ECB Banking Supervision priorities and EBA guidelines

About This Article

This analysis is based on publicly available supervisory publications from the ECB, EBA, and national competent authorities, combined with Venvera’s direct experience supporting EU financial entities with DORA compliance. The five gap areas identified reflect patterns observed across multiple jurisdictions and institution types.

Disclaimer: This article is for informational purposes only and does not constitute legal or regulatory advice. Financial entities should consult with qualified legal and compliance professionals regarding their specific DORA obligations. Regulatory interpretations may vary by jurisdiction and entity type. References to supervisory publications are based on publicly available documents as of March 2026.

© 2026 Venvera. All rights reserved.