📋 What this article covers: How the EU AI Act's extraterritorial scope works, which non-EU companies are caught and why, how "output used in the EU" is interpreted in practice, what the authorised representative requirement means, scenario-by-scenario analysis for US, UK, Canadian, and other non-EU companies, and what you actually need to do if you are in scope from outside the EU.

A software company in San Francisco selling an AI-powered hiring tool to European enterprises. A fintech in Singapore running credit decisions for EU customers. A UK health tech firm whose AI triage tool is used in Irish hospitals. A Canadian legaltech company with EU law firm subscribers. None of these companies are based in the EU. All of them are in scope of the EU AI Act.

The extraterritorial reach of EU regulation has become one of the defining features of the digital regulatory landscape. The GDPR established the template: if you offer goods or services to people in the EU, or monitor their behaviour there, you are subject to EU law regardless of where you are based. The EU AI Act follows the same logic, and then extends it further in ways that catch companies who genuinely believe they are outside its reach.

This article gives you a precise, practical answer to whether the EU AI Act applies to your company - not a general principle, but scenario-by-scenario analysis covering the situations that actually generate confusion. If you are a non-EU company with any exposure to EU markets, customers, or users, you need to know where you stand before the major compliance deadlines arrive.

How the Extraterritorial Scope Actually Works

Article 2 of the EU AI Act sets out its scope. The key provisions for non-EU companies are found in Article 2(1)(a) and 2(1)(c), which extend the Act's application beyond EU-established entities in two specific ways.

First, the Act applies to providers that place an AI system on the EU market or put an AI system into service in the EU - regardless of whether those providers are established in the EU. This is the clean, bright-line rule: if you sell or make available an AI system to EU customers or organisations, you are a provider under the Act and you carry provider obligations.

Second - and this is where many non-EU companies are surprised - the Act also applies to providers and deployers established outside the EU when the output of their AI system is used in the EU. This catches situations where the AI system is not marketed to EU customers but its outputs reach EU persons anyway: a US company whose AI makes decisions about EU employees, a non-EU algorithmic pricing system used in EU markets, a foreign government AI system whose outputs affect EU citizens.

What the Act does not do is create different tiers of obligation based on where a company is established. A US company selling a high-risk AI system to an EU hospital has exactly the same provider obligations as an EU company doing the same thing. There is no lighter regime for being based outside the EU. The only difference is the additional requirement - for non-EU providers of high-risk AI - to appoint an authorised representative established within the EU.

The Key Trigger: "Output Used in the EU"

The "output used in the EU" trigger is the most expansive and the least understood part of the extraterritorial scope. It needs unpacking carefully because it catches companies who have never deliberately targeted EU markets.

The Act does not define "output used in the EU" exhaustively, but the legislative intent - and the recitals - make clear that it is designed to prevent regulatory arbitrage through relocation. If a company establishes its AI operations in a non-EU jurisdiction specifically to avoid EU AI Act obligations while still serving EU users or affecting EU persons, the Act applies regardless.

In practice, the trigger activates when any of the following applies.

The AI system produces outputs - decisions, recommendations, content, predictions - that directly affect EU persons

A non-EU company's AI model that determines loan eligibility for EU applicants produces outputs used in the EU. A non-EU HR AI system that scores CVs from EU job applicants produces outputs used in the EU. The person affected is in the EU; the output therefore is used in the EU.

An EU-established organisation deploys or uses the non-EU company's AI system

A US AI vendor whose tool is deployed by an EU financial institution is putting the system into service in the EU. The EU customer's use of the tool is the use in the EU that triggers the non-EU vendor's provider obligations.

The AI system processes data about EU persons and the results feed into decisions affecting those persons

A non-EU company running an AI model to analyse EU consumer behaviour for targeted advertising, pricing, or content moderation produces outputs that are used in the EU - even if the company itself never markets its AI to EU customers as a standalone product.

Where the trigger does not activate: a non-EU company that uses AI exclusively for internal operations with no EU market activity, no EU customers, no EU employees affected by AI decisions, and no AI outputs reaching any person in the EU. That company is genuinely outside scope - but the bar for being genuinely outside scope is higher than most companies assume when they first assess their position.
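The trigger logic described in this section can be sketched as a small decision function. This is a minimal illustration rather than a legal test: the `Exposure` fields and the `scope_triggers` function are hypothetical names introduced here, and each flag compresses a judgment that in practice needs case-by-case analysis.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Exposure:
    """Hypothetical self-assessment flags for a non-EU company (illustrative only)."""
    places_system_on_eu_market: bool = False    # sells or makes the system available in the EU
    deployed_by_eu_organisation: bool = False   # an EU-established customer uses the system
    outputs_affect_eu_persons: bool = False     # decisions, scores, or content reach people in the EU
    eu_person_data_feeds_decisions: bool = False  # results feed decisions about EU persons


def scope_triggers(e: Exposure) -> list[str]:
    """Return the scope triggers that apply; an empty list means no EU nexus was found."""
    triggers = []
    if e.places_system_on_eu_market or e.deployed_by_eu_organisation:
        triggers.append("placed on the EU market or put into service in the EU")
    if e.outputs_affect_eu_persons or e.eu_person_data_feeds_decisions:
        triggers.append("output used in the EU")
    return triggers


# A non-EU HR tool that scores CVs from EU applicants but is never marketed in the EU.
hr_tool = Exposure(outputs_affect_eu_persons=True)
print(scope_triggers(hr_tool))

# A company using AI purely for internal, non-EU operations.
internal_only = Exposure()
print(scope_triggers(internal_only))
```

An empty result only means none of the flags was set. It does not replace the reassessment needed whenever EU customers, employees, or users enter the picture.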

Scenario-by-Scenario Analysis

The abstract scope rules become clearer through concrete scenarios. The following covers the situations that generate the most genuine uncertainty for non-EU companies assessing their position.

| Scenario | In scope? | Role and obligations |
|---|---|---|
| US SaaS company selling AI-powered recruitment software to EU employers | ✅ Yes | Provider of a high-risk AI system (Annex III, employment). Must meet all high-risk provider obligations. Must appoint an EU authorised representative. |
| Canadian fintech running AI credit decisions for EU customers, with no EU legal entity | ✅ Yes | Provider placing high-risk AI (Annex III, essential financial services) on the EU market. Output used in the EU. Must appoint an EU authorised representative. |
| Indian IT services company providing AI-powered document processing to EU clients under a managed services contract | ✅ Yes | Provider putting AI into service in the EU through the managed services arrangement. Obligations depend on whether the AI is used for high-risk purposes by the EU client. |
| US company releasing an open-source LLM on GitHub, accessible globally | ✅ Yes (partially) | Provider of a GPAI model placed on the EU market. GPAI chapter obligations apply from August 2025. Some open-source exemptions apply but do not remove all obligations - particularly for models above the systemic risk compute threshold. |
| Australian insurer using AI to underwrite policies for EU policyholders | ✅ Yes | Provider whose AI output is used in the EU. High-risk classification likely applies (essential services). Authorised representative required. |
| US company using AI exclusively for internal US operations - no EU customers, no EU employees, no EU market activity | ❌ No | No EU nexus. AI outputs do not reach EU persons. Genuinely out of scope - but if EU operations begin, scope must be reassessed immediately. |
| Non-EU academic institution using AI for pure research not placed on the market | ❌ No | Research and development exemption applies. Scope applies when the system is commercialised or deployed outside the research context. |
| Non-EU company building AI that is integrated into another company's product before reaching the EU market | ⚠️ Depends | If the integrating company substantially modifies the AI, the integrator becomes the provider. If they integrate it as-is and the original AI retains its characteristics, the original developer may remain the provider. Requires case-by-case analysis of the integration and any modifications. |
| Non-EU social media company using AI for content recommendation shown to EU users | ✅ Yes | AI output used in the EU. Risk classification depends on the specific application - general recommendation systems are typically minimal-risk, but AI used for targeted content that exploits vulnerabilities may cross into prohibited territory. Transparency obligations under the Digital Services Act may interact. |

By Country: US, UK, Canada, and Others

The EU AI Act treats all non-EU countries identically for scope purposes. There is no EU-equivalent treaty arrangement, no adequacy decision, and no preferential treatment for any particular non-EU jurisdiction. However, the practical implications vary by country because of differences in existing regulatory frameworks and the maturity of mutual recognition discussions.

🇺🇸 United States

The US has no equivalent federal AI regulation at the same level of comprehensiveness as the EU AI Act. US companies operating in EU markets are fully subject to the EU Act with no domestic equivalent to align against. This creates a dual-track compliance burden for US AI companies with EU customers: US-specific state-level AI regulations on one side, EU AI Act on the other, with limited overlap in their requirements. US companies should not assume that NIST AI RMF compliance, state AI bias laws, or compliance with the Executive Order on AI provides any credit toward EU AI Act obligations - the frameworks are substantially different in structure and requirements.

🇬🇧 United Kingdom

The UK has explicitly chosen a different regulatory approach - a principles-based, sector-led AI framework rather than a comprehensive horizontal regulation. This means there is no UK equivalent of the EU AI Act and no prospect of mutual recognition in the short term. UK companies with EU customers or EU market presence are in exactly the same position as US companies: fully subject to EU AI Act obligations when they meet the scope criteria, with no domestic framework that maps onto EU requirements. UK companies already complying with ICO guidance on AI and data protection, or with FCA expectations around algorithmic models, should treat this as useful groundwork but not as EU AI Act compliance.

🇨🇦 Canada

Canada proposed its own AI regulation, the Artificial Intelligence and Data Act (AIDA), as part of Bill C-27, but the bill lapsed when Parliament was prorogued in January 2025, and whether and when comparable legislation will return remains uncertain. Canadian companies with EU exposure face the EU AI Act's full scope requirements independently of whatever Canadian law ultimately requires. The frameworks share some conceptual overlap - high-risk classification, transparency, human oversight - but their specific requirements differ enough that AIDA compliance would not constitute EU AI Act compliance even if AIDA were fully in force.

🇨🇭 Switzerland

Switzerland is not an EU member but has extensive EU market integration through bilateral agreements. Swiss companies placing AI on the EU market or into service in EU member states are subject to the EU AI Act under its standard extraterritorial provisions. Switzerland's approach to AI regulation is still developing and does not currently provide a passporting equivalent for EU AI Act compliance.

All other non-EU countries

The analysis is identical. Establishment in Japan, South Korea, Brazil, India, Singapore, Australia, or any other non-EU country does not create any preferential scope treatment. The EU AI Act applies whenever the scope conditions are met - EU market access or EU-directed AI output - regardless of the company's home jurisdiction. Whether or not a company's home country has its own AI regulation is irrelevant to EU AI Act scope.

The Authorised Representative Requirement

Non-EU providers of high-risk AI systems must, before placing their systems on the EU market or putting them into service in the EU, appoint an authorised representative established in the EU. This requirement is set out in Article 22 and mirrors the equivalent requirement under the GDPR (Article 27) for non-EU data controllers without EU establishments.

The authorised representative is not merely a postal address. Their role carries substantive legal responsibility. Specifically, the authorised representative must be empowered to act on behalf of the non-EU provider in dealings with national competent authorities, must be named in the technical documentation and declarations of conformity, and can be held liable by EU authorities for the provider's compliance obligations when the provider itself is unreachable or non-cooperative.

What the authorised representative must do

| Obligation | Detail |
|---|---|
| Verify documentation | Ensure the EU declaration of conformity and technical documentation have been drawn up and are available to authorities on request |
| Cooperate with authorities | Act as the contact point for national competent authorities; provide information and documentation on request; facilitate access to the provider if needed |
| Register in the EU AI database | For high-risk AI systems requiring registration, ensure the AI system and the provider's details are correctly registered in the EU-wide AI database |
| Handle serious incidents | Where required, notify authorities of serious incidents or malfunctions; be the escalation point for safety concerns raised by EU deployers or users |
| Maintain a mandate | Hold a written mandate from the non-EU provider authorising them to act; this mandate must specify the scope of authority and be available to authorities on request |

Who qualifies as an authorised representative?

Any legal or natural person established in an EU member state can serve as an authorised representative - a law firm, a compliance consultancy, an EU subsidiary of the non-EU company, or a dedicated regulatory representation service. If the non-EU company has an EU subsidiary or affiliate, that entity can take on the role, which is often the simplest structural solution. Third-party representation services are emerging, similar to those that developed in the GDPR market, though the AI Act's requirements are more substantive and the representative's liability exposure is higher.
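As a rough sketch, the two questions in this section (do you need a representative, and does a candidate meet the basic conditions) can be expressed as simple checks. The class names, field names, and the example firm are hypothetical and introduced only for illustration. The checks cover just the conditions stated in the text, not the full legal analysis.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Provider:
    """Hypothetical description of a provider (illustrative field names)."""
    established_in_eu: bool
    offers_high_risk_system_in_eu: bool


@dataclass(frozen=True)
class Representative:
    """Hypothetical description of a candidate authorised representative."""
    name: str
    established_in_eu_member_state: bool
    has_written_mandate: bool


def needs_authorised_representative(p: Provider) -> bool:
    """Per the text: required for non-EU providers of high-risk AI systems."""
    return (not p.established_in_eu) and p.offers_high_risk_system_in_eu


def representative_gaps(r: Representative) -> list[str]:
    """List the basic conditions from the text that the candidate does not yet meet."""
    gaps = []
    if not r.established_in_eu_member_state:
        gaps.append("not established in an EU member state")
    if not r.has_written_mandate:
        gaps.append("no written mandate from the provider")
    return gaps


us_vendor = Provider(established_in_eu=False, offers_high_risk_system_in_eu=True)
candidate = Representative("Example EU subsidiary", True, has_written_mandate=False)

if needs_authorised_representative(us_vendor):
    print(representative_gaps(candidate))
```

Keeping the mandate as an explicit field reflects the point above that the representative is more than a postal address: without the written mandate, the appointment is incomplete.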

What Non-EU Companies in Scope Actually Need to Do

If you have determined that the EU AI Act applies to your company, the obligations that follow depend on your role in the AI value chain and the risk classification of your AI systems. The following maps out the core action areas for the most common non-EU company profiles.

If you are a non-EU provider of high-risk AI systems (August 2026 deadline)

- Appoint an EU authorised representative - and do it well before your compliance deadline, because the representative needs time to prepare the documentation they will be responsible for.

- Build a risk management system for each high-risk AI system, as required by Article 9 - a continuous, iterative process covering the full lifecycle of the system.

- Prepare technical documentation under Article 11 and Annex IV - a detailed, maintained record of the system's design, development, testing, and performance characteristics.

- Implement human oversight measures under Article 14 - the system must be designed to allow EU deployers to understand, monitor, and where necessary override its outputs.

- Conduct a conformity assessment appropriate to your system type - either a self-assessment or third-party assessment depending on whether your system falls under a regulated product sector.

- Register the system in the EU AI database before it is placed on the EU market or put into service.

- Produce a declaration of conformity and affix the CE marking where required.

- Update EU customer contracts to include the required information for deployers - including instructions for use, intended purpose limitations, and information needed for the deployer to carry out their own obligations.
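One way to keep track of the steps above is a simple ordered checklist. The sketch below is illustrative only: the step labels and the `outstanding_steps` helper are hypothetical names, and the article references in the comments are the ones cited in the list.

```python
# Hypothetical labels mirroring the list above, in the same order.
HIGH_RISK_PROVIDER_STEPS = [
    "appoint_authorised_representative",
    "risk_management_system",                    # Article 9
    "technical_documentation",                   # Article 11 and Annex IV
    "human_oversight_measures",                  # Article 14
    "conformity_assessment",
    "eu_database_registration",
    "declaration_of_conformity_and_ce_marking",
    "deployer_information_in_contracts",
]


def outstanding_steps(completed: set[str]) -> list[str]:
    """Return the steps not yet completed, preserving the checklist order."""
    unknown = completed - set(HIGH_RISK_PROVIDER_STEPS)
    if unknown:
        # Fail loudly on typos so a misspelled label is not silently ignored.
        raise ValueError(f"unknown steps: {sorted(unknown)}")
    return [step for step in HIGH_RISK_PROVIDER_STEPS if step not in completed]


done = {"appoint_authorised_representative", "technical_documentation"}
for step in outstanding_steps(done):
    print("TODO:", step)
```

In practice each step is a workstream rather than a checkbox, so a list like this is only useful as an index into the underlying evidence.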

If you are a non-EU provider of a GPAI model (August 2025 deadline)

- Prepare and maintain technical documentation - covering training methodology, training data, evaluation results, and known limitations.

- Provide information to downstream providers who build on your model - including a model card or equivalent that allows them to assess the model's capabilities and risks for their specific use cases.

- Implement a copyright compliance policy - evidence that your training data sourcing and processing respects EU copyright law, including for opt-out requests under the text and data mining exception.

- If your model is classified as a GPAI model with systemic risk (presumed where cumulative training compute exceeds 10²⁵ FLOPs): adversarial testing, incident reporting to the EU AI Office, and enhanced cybersecurity obligations apply in addition to the above.
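To get a first sense of where a model sits relative to the 10²⁵ FLOPs figure, a back-of-the-envelope estimate can help. The sketch below uses the common `6 × parameters × training tokens` rule of thumb for dense transformer training. That heuristic is an assumption introduced here, not a method prescribed by the Act, and the function names and the example model are hypothetical.

```python
SYSTEMIC_RISK_THRESHOLD_FLOPS = 1e25  # threshold cited in the text


def estimate_training_flops(parameters: float, training_tokens: float) -> float:
    """Rough estimate via the 6 * N * D rule of thumb (an assumption, not the Act's method)."""
    return 6.0 * parameters * training_tokens


def exceeds_systemic_risk_threshold(flops: float) -> bool:
    """True when the estimated training compute is above the cited threshold."""
    return flops > SYSTEMIC_RISK_THRESHOLD_FLOPS


# Hypothetical model: 70 billion parameters trained on 2 trillion tokens.
flops = estimate_training_flops(70e9, 2e12)
print(f"{flops:.2e}", exceeds_systemic_risk_threshold(flops))
```

For this hypothetical model the estimate is roughly 8.4 × 10²³ FLOPs, well below the threshold. An estimate close to the line should be treated as a prompt for a proper compute accounting, not as an answer.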

If you are a non-EU deployer of high-risk AI with EU operations

- Verify that the AI system you are deploying is compliant - request documentation from the provider and confirm that required conformity assessments have been conducted.

- Use the AI system only for its intended purpose as documented by the provider - using a system outside its intended scope can transfer provider obligations to you.

- Implement human oversight appropriate to the specific use case and ensure staff responsible for AI-assisted decisions are trained in the system's operation and limitations.

- Monitor performance on an ongoing basis and report malfunctions or risks to the provider and, where required, to national competent authorities.

- Inform and protect affected persons where required - including informing employees when AI is used in decisions about them, and providing the transparency notices required for certain AI applications.

When You Are Genuinely Out of Scope

Given how broad the extraterritorial reach is, it is worth being precise about the conditions under which a non-EU company is genuinely outside scope. All of the following must be true simultaneously.

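✅ Conditions for being genuinely out of scope

- You use AI exclusively for internal operations, with no EU market activity.

- You have no EU customers.

- No EU employees are affected by your AI's decisions.

- No AI outputs reach any person in the EU.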

If any of these conditions is not met - or may not be met in the future as you grow - you should treat yourself as in scope and take compliance steps accordingly. The cost of incorrectly self-assessing as out of scope and later being found in breach is substantially higher than the cost of building compliance infrastructure proactively.

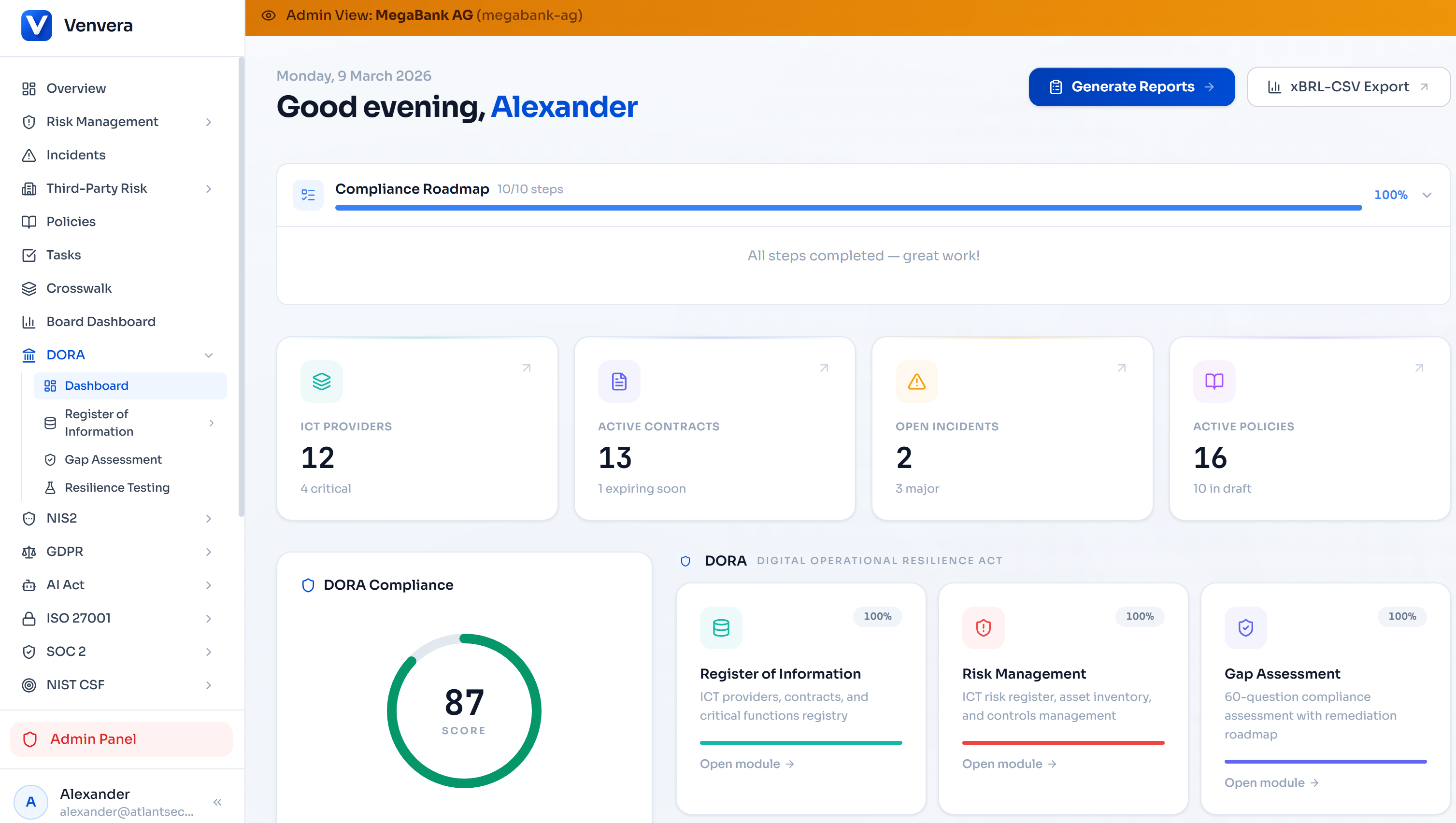

EU compliance built for European financial entities.

Venvera is purpose-built for EU-regulated financial institutions managing overlapping obligations across DORA, the EU AI Act, and sector-specific requirements - with EU data residency as standard and no data leaving the EU.

Learn more → Venvera.comFrequently Asked Questions

Does the EU AI Act apply to us if we only have one EU customer?

Scope under the EU AI Act is not conditional on the number of EU customers. If you place an AI system on the EU market - including by making it available to a single EU customer - you are in scope as a provider for that system. The risk classification of the AI system and the nature of your obligations are what vary, not whether the Act applies at all.

We are a US company but we have an EU subsidiary - which entity has the obligations?

If the EU subsidiary places the AI system on the EU market under its own name or as the responsible entity, the EU subsidiary is the provider and carries the full provider obligations. If the US parent develops and places the system on the market while the EU subsidiary only distributes or deploys it, the US parent is the provider and the EU subsidiary may be the deployer or distributor. The entity that controls the AI system and determines how and where it is placed on the market is the provider. In practice, many groups find that routing obligations through the EU subsidiary is the cleanest structure, as it removes the need for a separate authorised representative appointment.

Is the EU AI Act's extraterritorial reach actually enforceable against non-EU companies?

The GDPR's extraterritorial enforcement provides the relevant precedent. In practice, non-EU companies with no EU assets or physical presence are harder to fine directly, but EU authorities have tools including market access restrictions - banning non-compliant AI systems from the EU market - which are highly effective against any company that values EU revenue. The authorised representative requirement also creates an EU-based enforcement hook. Companies that routinely ignore EU regulatory requirements find themselves effectively excluded from EU market access, which is often a more commercially significant consequence than the fine itself.

Does Brexit mean UK companies get any different treatment?

No. Since Brexit, the UK is treated as a third country under EU law. UK companies placing AI on the EU market or with AI outputs used in the EU are subject to the EU AI Act on exactly the same basis as a US, Canadian, or Australian company. There is no mutual recognition arrangement for AI regulation between the EU and UK, and none is imminent. UK companies need EU AI Act compliance entirely separately from their domestic UK AI obligations.

Do we need an authorised representative if we only sell to business customers, not consumers?

The authorised representative requirement for non-EU providers applies to high-risk AI systems placed on the EU market regardless of whether the customers are businesses or consumers. B2B AI sales to EU enterprises trigger the same requirement as consumer-facing AI products, if the AI system falls into the high-risk category. The distinction between B2B and B2C is irrelevant to scope and the authorised representative obligation.

Written by the Venvera compliance team. This article reflects the EU AI Act as enacted and interpretation as of February 2026. It does not constitute legal advice. Last updated: February 2026.