What this article covers: The specific tools available for EU AI Act compliance, what each one actually does well and badly, head-to-head comparison tables for classification, documentation, and governance capabilities, which tools fit which type of organisation, pricing expectations, and why most general GRC platforms fall short for AI Act-specific work.

Last year I spent three months helping a Swedish fintech evaluate compliance tooling for their EU AI Act programme. They had shortlisted seven products, run demos on five, and received proposals from four. By the end of the process they were more confused than when they started. Each vendor was using different terminology, claiming to cover different parts of the regulation, and making apples-to-apples comparison nearly impossible.

The problem was structural. The EU AI Act compliance software market is a mix of purpose-built AI governance tools, general GRC platforms that have added an "AI Act module," MLOps platforms that have bolted on compliance features, and a handful of sector-specific platforms. All of them claim to support EU AI Act compliance. Most of them do — for some parts of what the regulation requires, and not others.

This article names tools, compares them honestly against the specific requirements of the regulation, and gives you the information you need to decide which one — or which combination — fits your situation.

Jump to section

- What EU AI Act compliance actually needs from software

- Purpose-built AI governance platforms — Credo AI, Holistic AI, Arthur AI

- General GRC platforms with AI Act modules — compared

- MLOps platforms with compliance features — compared

- Master comparison table across all tools

- Best tool by organisation type

- Financial entities: DORA + EU AI Act together

- Questions to ask vendors before you buy

What EU AI Act Compliance Actually Needs from Software

The EU AI Act creates five distinct compliance workstreams. Tools vary dramatically in how many of them they genuinely address versus nominally cover. Before evaluating any platform, know which of these five tasks you need it to handle.

| Compliance task | What software genuinely helps with | What requires human expertise regardless |
|---|---|---|
| AI inventory & classification | Guided Annex III questionnaires, classification rationale capture, risk tier dashboards | Boundary case judgements; discovering shadow AI embedded in SaaS tools |
| Technical documentation | Annex IV-structured fields, completeness validation, lifecycle versioning | Content: software structures the document, it does not write it |
| Risk management & conformity | Risk register, control mapping, conformity assessment workflow, Declaration of Conformity generation | Risk identification requires domain expertise in the system's operational context |
| Human oversight governance | Policy storage, training tracking, override log management | Designing oversight workflows that are genuinely meaningful, not just formally documented |
| Post-market monitoring | Performance dashboards, drift detection, incident logging, regulatory alert feeds | Determining whether a performance change constitutes a reportable serious incident |

Purpose-Built AI Governance Platforms

These tools were built specifically for AI risk and governance, and they track the EU AI Act's structure more closely than the other categories. The main players as of early 2026 are Credo AI, Holistic AI, and Arthur AI. Credo AI and Holistic AI have the most mature support for classification and documentation; Arthur AI is a monitoring tool and covers neither, as the master comparison table shows.

General GRC Platforms with AI Act Modules — Compared

The major GRC platforms have all added EU AI Act content. They work better as an existing investment to extend than as a first-choice tool for AI Act compliance. If you are deeply embedded in ServiceNow or OneTrust already, there is value in adding their AI Act module rather than introducing a new platform. If choosing from scratch, the purpose-built tools are almost always better for the AI Act-specific workstreams.

| Platform | AI Act module? | Annex III classification logic | Annex IV structured docs | Deployer workflows | Best use case |
|---|---|---|---|---|---|
| ServiceNow GRC | Yes | Manual config | Doc storage only | Generic | Large enterprises already on ServiceNow; consolidation play |
| OneTrust | Yes | Limited | No | Weak | Existing OneTrust GDPR users adding AI Act alongside privacy compliance |
| Vanta | Partial | No | No | No | SOC 2 / ISO 27001 only; do not use for AI Act-specific work |
| Drata | Partial | No | No | No | Same as Vanta: security framework compliance automation, not AI regulation |
| MetricStream | Yes | Manual config | Template-based | Generic workflows | Large financial institutions with existing MetricStream GRC programmes |

MLOps Platforms with Compliance Features — Compared

MLOps platforms sit inside the AI development workflow. Their strength is proximity to actual model data: bias metrics, performance statistics, and experiment logs come from real systems rather than manual entry. Their weakness is that they are engineering tools, not compliance governance tools. They handle post-market monitoring and partial documentation well. Most do not handle classification at all (Dataiku and IBM OpenScale offer partial support), and they are not built for human oversight governance or conformity assessment.

| Platform | Model cards | Bias monitoring | Drift detection | AI Act classification | Compliance governance | Approx. cost |
|---|---|---|---|---|---|---|
| Weights & Biases | Strong | Basic | Yes | No | No | From ~€3k/yr |
| MLflow (OSS) | Yes | No | Via plugins | No | No | Free / hosting costs |
| Dataiku | Yes | Good | Good | Partial | Basic | €25–90k/yr |
| IBM OpenScale | Strong | Strong | Strong | Partial | IBM ecosystem | IBM contract-based |

Master Comparison — All Tools Across All Five Requirements

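This is the table to bring to your evaluation process. Ratings reflect native capability: Yes = purpose-built functionality, Partial = works but requires significant configuration or is limited in scope, No = not supported. The EU data residency column instead records whether EU hosting is the default, available on request, partial, or unavailable.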

| Tool | AI inventory & classification | Technical documentation | Risk mgmt & conformity | Human oversight governance | Post-market monitoring | DORA integration | EU data residency | Approx. annual cost |

|---|---|---|---|---|---|---|---|---|

| Credo AI | Yes | Yes | Yes | Partial | No | No | On request | €40–120k |

| Holistic AI | Yes | Partial | Yes | Yes | Partial | No | Default | €20–80k |

| Arthur AI | No | No | No | No | Yes | No | No | €30k+ |

| ServiceNow GRC | Partial | No | Partial | Partial | Partial | No | Partial | Add-on to licence |

| OneTrust | Partial | No | Partial | No | No | No | Partial | €15–50k add-on |

| Vanta / Drata | No | No | No | No | No | No | No | €10–30k |

| Dataiku | Partial | Partial | No | No | Yes | No | Partial | €25–90k |

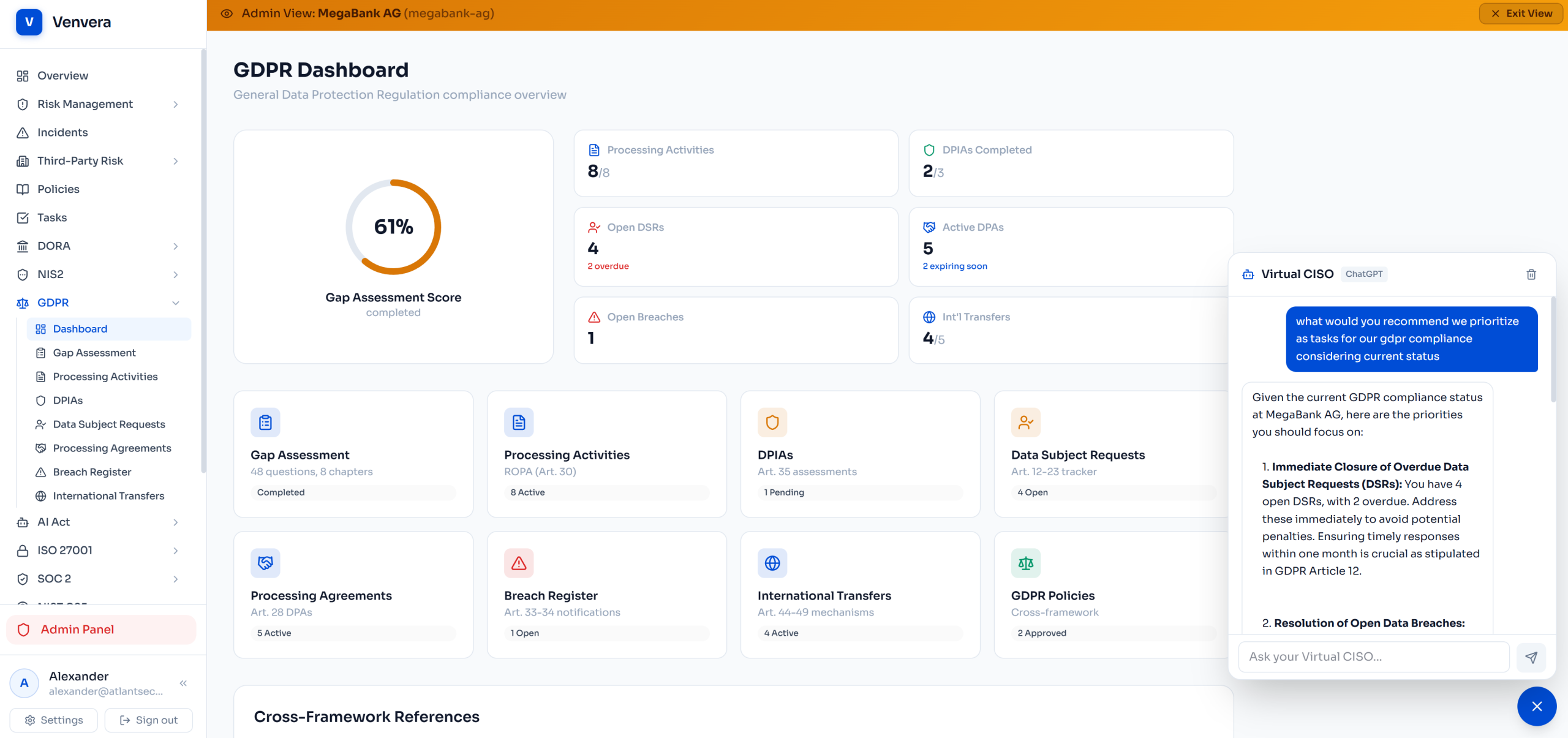

| Venvera | Yes | Yes | Yes | Yes | Partial | Yes | Default | Contact |
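
One way to use the master comparison in an evaluation is to filter it by the workstreams you actually need. The sketch below transcribes the five workstream ratings from the table and ranks tools that meet a minimum rating on every required workstream. The function name `shortlist`, the workstream keys, and the 2/1/0 scoring scale are assumptions made for illustration, not part of any vendor's product or of the ratings themselves.

```python
# Ratings transcribed from the master comparison table (five workstream columns only).
WORKSTREAMS = ("classification", "documentation", "risk_conformity", "oversight", "monitoring")

RATINGS = {
    "Credo AI":       ("Yes", "Yes", "Yes", "Partial", "No"),
    "Holistic AI":    ("Yes", "Partial", "Yes", "Yes", "Partial"),
    "Arthur AI":      ("No", "No", "No", "No", "Yes"),
    "ServiceNow GRC": ("Partial", "No", "Partial", "Partial", "Partial"),
    "OneTrust":       ("Partial", "No", "Partial", "No", "No"),
    "Vanta / Drata":  ("No", "No", "No", "No", "No"),
    "Dataiku":        ("Partial", "Partial", "No", "No", "Yes"),
    "Venvera":        ("Yes", "Yes", "Yes", "Yes", "Partial"),
}

# Hypothetical scoring scale; replace with your own weighting if needed.
SCORE = {"Yes": 2, "Partial": 1, "No": 0}


def shortlist(required, min_rating="Partial"):
    """Return (tool, total_score) pairs for tools that reach min_rating
    on every required workstream, highest total first (ties by name)."""
    columns = [WORKSTREAMS.index(name) for name in required]
    floor = SCORE[min_rating]
    results = []
    for tool, ratings in RATINGS.items():
        scores = [SCORE[ratings[col]] for col in columns]
        if min(scores) >= floor:
            results.append((tool, sum(scores)))
    return sorted(results, key=lambda pair: (-pair[1], pair[0]))


if __name__ == "__main__":
    for tool, total in shortlist(["classification", "documentation"]):
        print(f"{tool}: {total}")
```

A filter like this only narrows the field. Cost, data residency, and DORA integration still need to be weighed separately, and the ratings themselves should be verified with each vendor.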

Best Tool by Organisation Type

| Organisation type | Primary recommendation | Add for monitoring | Avoid |
|---|---|---|---|
| AI software company (provider) | Credo AI | Arthur AI or Dataiku | Vanta, Drata |
| EU financial entity (DORA + AI Act) | Venvera | IBM OpenScale if building in-house AI | General GRC platforms lacking DORA context |
| Mid-market EU company (primarily deployer) | Holistic AI | Weights & Biases if building internal AI | Credo AI (weak deployer workflows) |
| Large enterprise with existing GRC investment | ServiceNow add-on + Credo AI or Holistic AI for AI-specific work | Dataiku or Arthur AI | Relying on GRC add-on alone |
| MedTech / medical device manufacturer | Holistic AI + existing QMS | IBM OpenScale for clinical AI monitoring | Tools with no MDR awareness |
| Small AI startup (<50 employees) | Holistic AI (more accessible) or structured manual templates | MLflow + open-source bias monitoring plugins | Enterprise GRC platforms: overkill and overpriced |

Special Case: Financial Entities Managing DORA and EU AI Act Together

For banks, insurers, and investment firms, the EU AI Act compliance problem has a specific shape that none of the pure-play AI governance tools address well. The ICT third-party provider data you maintain for the DORA Register of Information is the same data you need to identify AI providers and assess their EU AI Act compliance status. A credit-scoring API vendor appears in your DORA RoI as an ICT provider. Under the EU AI Act, that same vendor is a provider of a high-risk AI system, and you as the deploying bank have deployer obligations on top of your DORA third-party risk obligations.

Managing this in two separate platforms means maintaining overlapping provider data twice, with no structural link between the DORA compliance view and the AI Act compliance view. Most tool comparisons miss this connection because most of the tools were not built with it in mind.

| Compliance task | DORA obligation | EU AI Act overlap |
|---|---|---|
| Third-party provider register | RoI: all ICT third-party providers documented with B-table relational structure | Subset of ICT providers are also AI system providers: same entity, additional AI Act conformity and risk-tier data to track |
| Contractual requirements | DORA Article 30 mandatory ICT contract clauses | EU AI Act Article 25 deployer rights: AI documentation access, instructions for use, incident cooperation |
| Incident reporting | Major ICT incidents to NCA under DORA Article 19 | Serious AI incidents to market surveillance authority, with trigger events that overlap DORA incidents for AI-powered ICT services |
| Ongoing monitoring | ICT service performance and resilience monitoring | AI accuracy, bias, and drift monitoring: the same vendor service monitored from two regulatory angles simultaneously |
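
The overlap in the table can be pictured as a single provider record that carries both views. The following is a minimal sketch under stated assumptions: the class names, field names, and the `deployer_gaps` helper are hypothetical and do not reflect the actual DORA RoI template schema or any product's data model. It shows an AI Act profile attached to the same ICT provider entry, so that deployer-side gaps can be listed without a second register.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class AIActProfile:
    """AI Act data for a provider that also supplies an AI system (illustrative fields)."""
    system_name: str
    risk_tier: str  # e.g. "high-risk", "limited", "minimal"
    documentation_access: bool = False
    instructions_for_use: bool = False
    incident_cooperation_clause: bool = False


@dataclass
class ProviderRecord:
    """One entry per ICT third-party provider, shared by the DORA and AI Act views."""
    provider_id: str
    legal_name: str
    dora_article_30_clauses: bool  # mandatory ICT contract clauses in place
    ai_profile: Optional[AIActProfile] = None  # None when the provider supplies no AI system


def deployer_gaps(register: List[ProviderRecord]) -> Dict[str, List[str]]:
    """Map provider_id to the missing deployer-side items for high-risk AI providers."""
    gaps: Dict[str, List[str]] = {}
    for record in register:
        profile = record.ai_profile
        if profile is None or profile.risk_tier != "high-risk":
            continue
        missing = [
            label
            for label, present in (
                ("documentation access", profile.documentation_access),
                ("instructions for use", profile.instructions_for_use),
                ("incident cooperation", profile.incident_cooperation_clause),
            )
            if not present
        ]
        if missing:
            gaps[record.provider_id] = missing
    return gaps


if __name__ == "__main__":
    register = [
        ProviderRecord("P-001", "Example Scoring API Ltd", True,
                       AIActProfile("credit-scoring", "high-risk", documentation_access=True)),
        ProviderRecord("P-002", "Example Hosting BV", True),
    ]
    print(deployer_gaps(register))
```

Keeping the AI Act profile as an optional attribute of the provider record, rather than a separate table keyed by vendor name, is what gives the structural link between the two compliance views.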

Questions to Ask Before You Buy

On classification

On documentation

On regulatory integration and data residency

DORA and EU AI Act in one platform. EU-hosted as standard.

Venvera connects your DORA Register of Information with EU AI Act deployer compliance — so ICT provider data and AI provider compliance status live in the same record, not in two separate tools you cross-reference manually. Amsterdam data residency as standard, no configuration required.

See Venvera at Venvera.com

Frequently Asked Questions

Can we manage EU AI Act compliance in Excel?

For one or two AI systems, a structured spreadsheet can work as a starting point for inventory and basic documentation. It breaks down quickly once you have multiple systems, multiple owners, any need to demonstrate compliance to an auditor, or any requirement to version documentation over time. The structural problems — no referential integrity, no validation, no audit trail, no completeness checking — are identical to the problems that make DORA Register of Information management in Excel unreliable.
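
To make the spreadsheet limitations concrete, here is a minimal sketch of the kind of completeness and referential-integrity checking a spreadsheet does not give you by default. The field names, the `validate_inventory` function, and the sample identifiers are all hypothetical; a real inventory would follow your own schema.

```python
# Hypothetical required fields for each AI system entry in the inventory.
REQUIRED_FIELDS = ("system_name", "owner", "risk_tier", "classification_rationale", "doc_version")


def validate_inventory(systems, provider_ids):
    """Return a list of human-readable problems; an empty list means every check passed."""
    problems = []
    seen_names = set()
    for row_number, system in enumerate(systems, start=1):
        name = system.get("system_name") or f"row {row_number}"

        # Completeness: every required field must be present and non-blank.
        for field_name in REQUIRED_FIELDS:
            value = system.get(field_name)
            if value is None or not str(value).strip():
                problems.append(f"{name}: missing {field_name}")

        # Referential integrity: a referenced provider must exist in the provider register.
        provider = system.get("provider_id")
        if provider is not None and provider not in provider_ids:
            problems.append(f"{name}: unknown provider_id {provider!r}")

        # Uniqueness: the same system should not be listed twice.
        if name in seen_names:
            problems.append(f"{name}: duplicate entry")
        seen_names.add(name)
    return problems


if __name__ == "__main__":
    inventory = [
        {"system_name": "credit-scoring", "owner": "Risk", "risk_tier": "high-risk",
         "classification_rationale": "", "doc_version": "1.2", "provider_id": "P-9"},
    ]
    for problem in validate_inventory(inventory, provider_ids={"P-1"}):
        print(problem)
```

Checks like these can be scripted against an exported spreadsheet as a stopgap, but they still leave the audit trail and versioning problems unsolved.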

Should we wait for the market to mature before buying?

The risk of waiting is that August 2026 arrives with no compliance infrastructure in place. Building a compliant AI inventory, classification record, and technical documentation set takes 12 to 18 months even with good tooling. Organisations that delay tooling decisions will reach that deadline with incomplete compliance work regardless of what the software market looks like by then. Start with the minimum tooling needed to run the classification and inventory workstream now, and plan for tooling upgrades as requirements become clearer from early supervisory activity.

We already use OneTrust for GDPR — can we use it for the AI Act?

OneTrust is useful for the GDPR-AI Act intersection, particularly privacy impact assessments for AI systems processing personal data. For the AI Act's core obligations — Annex III classification logic, Annex IV structured documentation, conformity assessment workflow — its functionality is limited. If you have a small number of clearly classifiable systems, OneTrust's AI Act module may be adequate. For regulated financial entities managing complex AI portfolios alongside DORA, the gaps are significant and a purpose-built tool alongside OneTrust is typically necessary.

Do we need MLOps integration in our compliance platform?

Useful but not essential for most organisations. The main benefit is that performance metrics and model metadata can flow into technical documentation automatically rather than being entered manually, which reduces documentation effort and improves accuracy. Credo AI has the best developer integrations currently available. If your AI development team and compliance team are separated organisationally, the integration benefit decreases because the data transfer happens through process anyway, and the compliance platform's governance capability matters more than its API connectivity.

Written by the Venvera compliance team. Tool capabilities and pricing in this market change rapidly — verify current features directly with vendors before purchasing. This article reflects the market as of February 2026 and does not constitute a commercial endorsement of any named product. Last updated: February 2026.